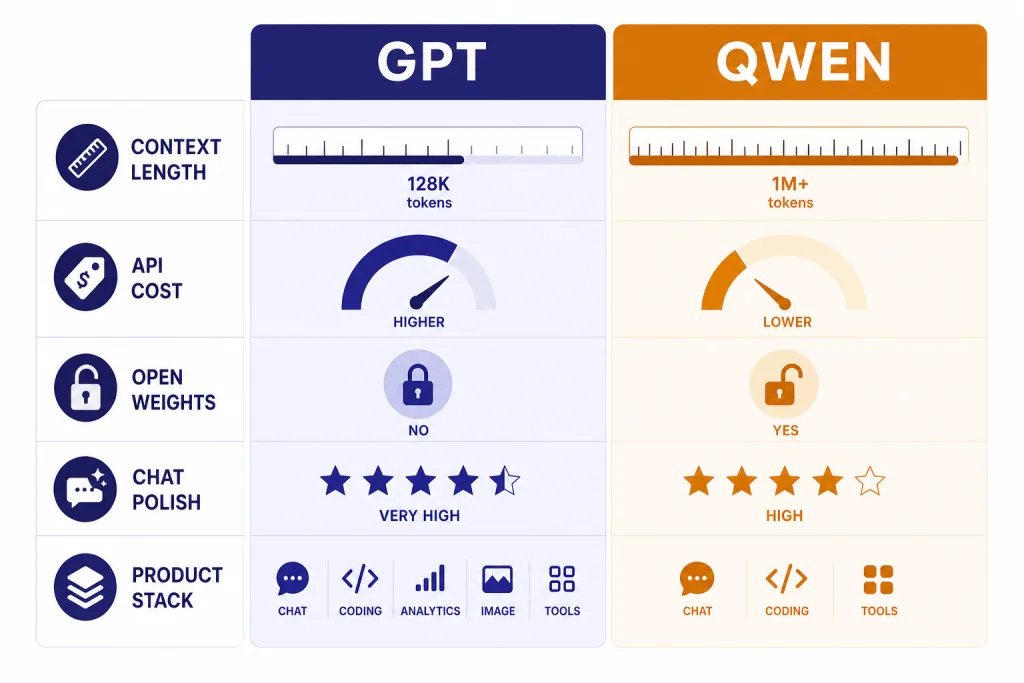

GPT is still the safer default for many U.S. users who want a polished assistant, strong documentation, and predictable access through ChatGPT or the OpenAI API. Qwen is the stronger challenger when you need very long context, Chinese-language strength, lower third-party API prices, or open-weight deployment options. As of May 2026, this is not a one-model comparison: OpenAI’s current top closed GPT options include GPT-5.5 and GPT-5.5-pro, while the cited GPT-5.1 API page remains useful for understanding documented GPT-5-era API capabilities and pricing. On the Alibaba side, the main comparison points are Qwen3.6-Plus as a hosted API model and Qwen3 as the open-weight line.[1][4][5]

Quick verdict

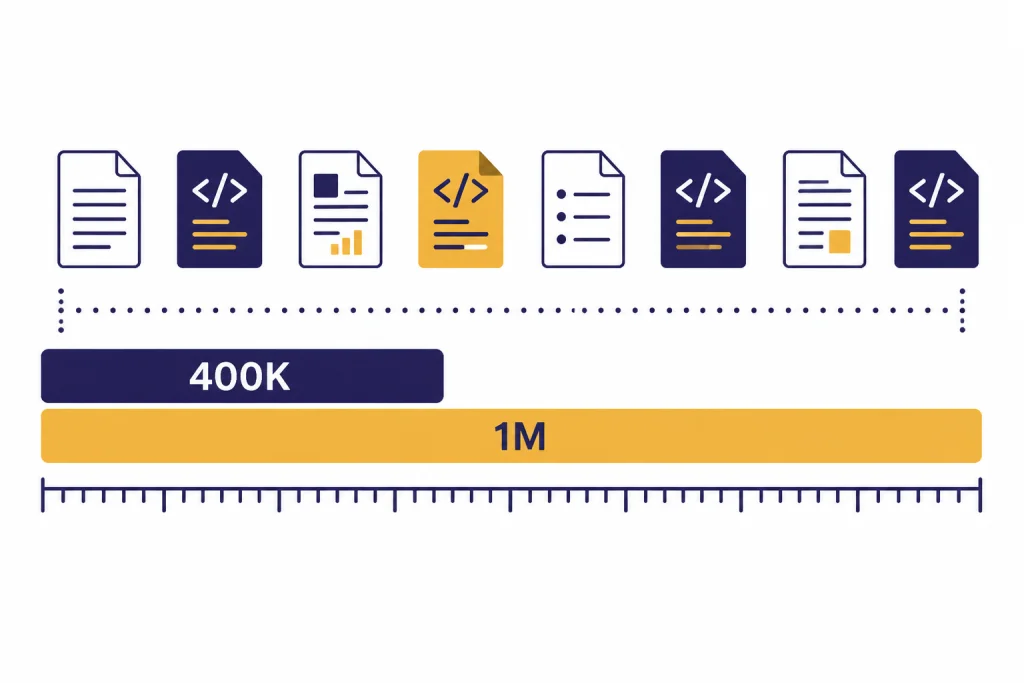

Choose GPT if you want the most straightforward path from chat to production. OpenAI’s current May 2026 GPT lineup has moved beyond GPT-5.1 to GPT-5.5 and GPT-5.5-pro at the top end, but the GPT-5.1 documentation still illustrates the platform pattern: configurable reasoning effort, text and image input, function calling, structured outputs, streaming, and a 400,000-token context window.[1] That combination makes GPT the more predictable choice for teams already using ChatGPT, OpenAI’s Responses API, or OpenAI-compatible evaluation and governance workflows.

Choose Qwen if your workload is dominated by long-context coding, Chinese and multilingual understanding, multimodal reasoning, or cost-sensitive experimentation. Alibaba released Qwen3.6-Plus on April 2, 2026, with an emphasis on agentic coding, repository-level engineering, multimodal perception, and a default 1-million-token context window.[4] Qwen also has a major open-weight branch. The Qwen3 family includes dense and mixture-of-experts models from 0.6B to 235B parameters, and the Qwen team says the public Qwen3 models are available under Apache 2.0.[5][6]

The short version is narrower than “one model wins.” GPT has the stronger product surface for mainstream business use: a polished consumer app, mature first-party API patterns, and broad English-first adoption. Qwen has the stronger fit when the test set is Chinese-heavy, context-heavy, or deployment-control-heavy. Treat those as starting hypotheses, then validate them with the small evaluation matrix below. For adjacent comparisons, the most relevant follow-ups are GPT vs Llama for open-weight strategy and GPT vs Mistral for another open/European model ecosystem.

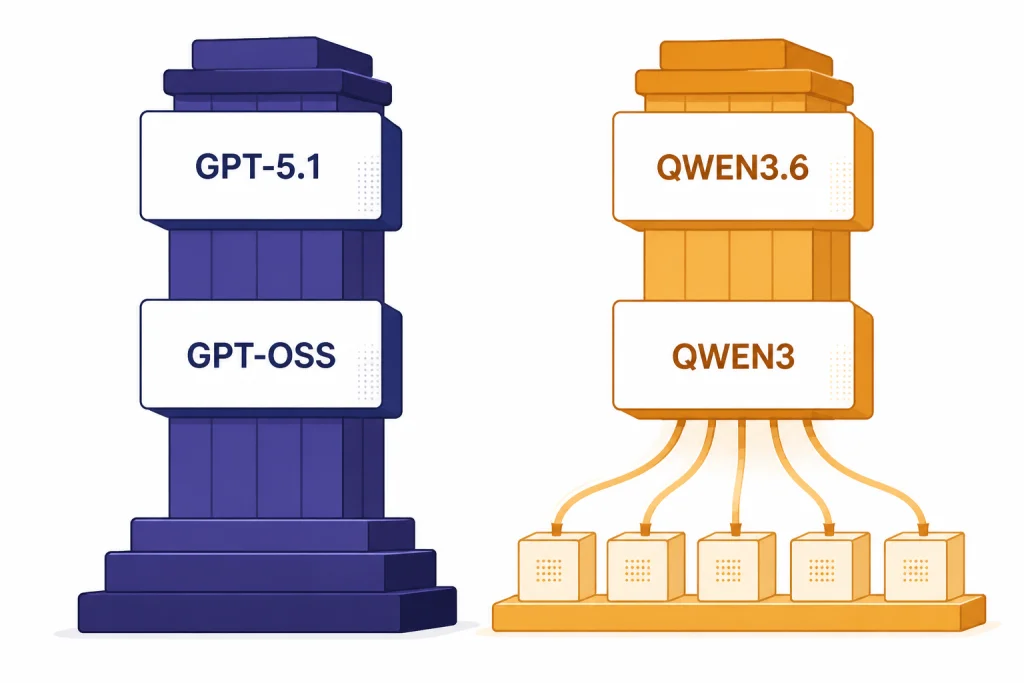

The model families compared

GPT is best understood as a closed frontier product line with a smaller open-weight branch. In May 2026, GPT-5.5 and GPT-5.5-pro are the current top closed GPT models to consider for new high-end OpenAI evaluations. GPT-5.1 remains relevant here because the available cited model page documents important GPT-5-era API traits and price examples, not because it is the newest GPT model.[1] OpenAI also offers gpt-oss-120b and gpt-oss-20b as open-weight reasoning models under Apache 2.0, but these are not the same as OpenAI’s top closed GPT models.[3]

Qwen is best understood as a hybrid ecosystem. Qwen3.6-Plus is the hosted model Alibaba describes for agentic coding and multimodal reasoning.[4] Qwen3 is the open-weight family that matters for local deployment and customization. The Qwen3 announcement lists two open-weight MoE models, Qwen3-235B-A22B and Qwen3-30B-A3B, plus six dense models from Qwen3-0.6B through Qwen3-32B.[5]

| Dimension | GPT | Qwen |
|---|---|---|
| Best current comparison point | For new high-end OpenAI testing, start with GPT-5.5 or GPT-5.5-pro; use GPT-5.1 documentation for cited API capability and pricing examples.[1] | Qwen3.6-Plus for hosted API work.[4] |
| Open-weight option | gpt-oss-120b and gpt-oss-20b.[3] | Qwen3 dense and MoE models under Apache 2.0.[5] |
| Largest published open-weight size | gpt-oss-120b has 117B parameters and 5.1B active parameters.[3] | Qwen3-235B-A22B has 235B total parameters and 22B activated parameters.[5] |
| Hosted long-context headline | GPT-5.1 lists a 400,000-token context window.[1] | Qwen3.6-Plus lists a 1-million-token context window through Alibaba and OpenRouter.[4][7] |
| Best fit | General assistants, business workflows, English-heavy work, mature API integration. | Long-context coding, Chinese-heavy work, self-hosting experiments, cost-sensitive inference. |

This difference matters. GPT feels like a product platform first. Qwen feels like a model ecosystem first. That is why the right answer changes depending on whether you are buying an assistant, building an app, fine-tuning an open model, or testing a coding agent. For an OpenAI-only view of the lineup, see all GPT models compared side by side.

Capabilities, context, and benchmarks

GPT has the advantage when you need a balanced general assistant with strong tool behavior, stable developer docs, and first-party support. GPT-5.1 supports streaming, function calling, structured outputs, reasoning tokens, and both Chat Completions and Responses API endpoints.[1] Newer GPT-5.5-era models should be tested first for top-end quality, but those platform features explain why GPT often feels easier to put into a customer-facing product than a model that only looks strong on a benchmark page.

Qwen has the more aggressive long-context story. Alibaba says Qwen3.6-Plus provides a 1-million-token context window by default for complex repository-level engineering.[4] OpenRouter’s Qwen3.6-Plus page also lists a 1,000,000-token context window and reports a 78.8 score on SWE-bench Verified.[7] Treat that score as useful but not final. Benchmarks move quickly, test harnesses differ, and provider routing can affect real output.

The practical context difference is easy to understand. GPT-5.1’s documented 400,000-token window is already large enough for long documents, multi-file code review, and substantial research bundles.[1] Qwen3.6-Plus’s 1-million-token window gives developers more room to include a full repository, long logs, generated plans, and repeated agent steps in a single workflow.[4] If your work is ordinary chat, the extra room may not matter. If your work is autonomous coding over a large codebase, it can matter a lot.
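A rough way to sanity-check the window difference is to estimate whether a given bundle of text would fit. The sketch below uses the two context windows cited above; the 4-characters-per-token ratio, the reserved output budget, and the function names are illustrative assumptions, not tokenizer measurements or provider rules.

```python
# Context windows as cited in this article.
CONTEXT_WINDOWS = {"GPT-5.1": 400_000, "Qwen3.6-Plus": 1_000_000}

# Assumption: roughly 4 characters per token for English text.
# Chinese text and source code tokenize differently, so measure with the
# provider's own tokenizer before relying on this.
CHARS_PER_TOKEN = 4


def estimate_tokens(char_count: int) -> int:
    """Ceiling division of characters by the assumed chars-per-token ratio."""
    return -(-char_count // CHARS_PER_TOKEN)


def fits(char_count: int, reserved_output_tokens: int = 20_000) -> dict:
    """Report, per model, whether the input plus a reserved output budget fits.

    Assumption: output tokens are counted against the same window.
    """
    needed = estimate_tokens(char_count) + reserved_output_tokens
    return {model: needed <= window for model, window in CONTEXT_WINDOWS.items()}


# Hypothetical bundle: 2.4 million characters of code and logs.
print(fits(2_400_000))  # {'GPT-5.1': False, 'Qwen3.6-Plus': True}
```

Fitting inside the window only means the request is accepted; it says nothing about whether the model uses the material well.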

Multilingual performance is another major split. The Qwen3 Technical Report says Qwen3 expanded multilingual support from 29 to 119 languages and dialects.[6] That does not automatically make Qwen better in every language, but it is a concrete reason to run Chinese, bilingual, and APAC-language tasks through Qwen before standardizing on GPT alone.

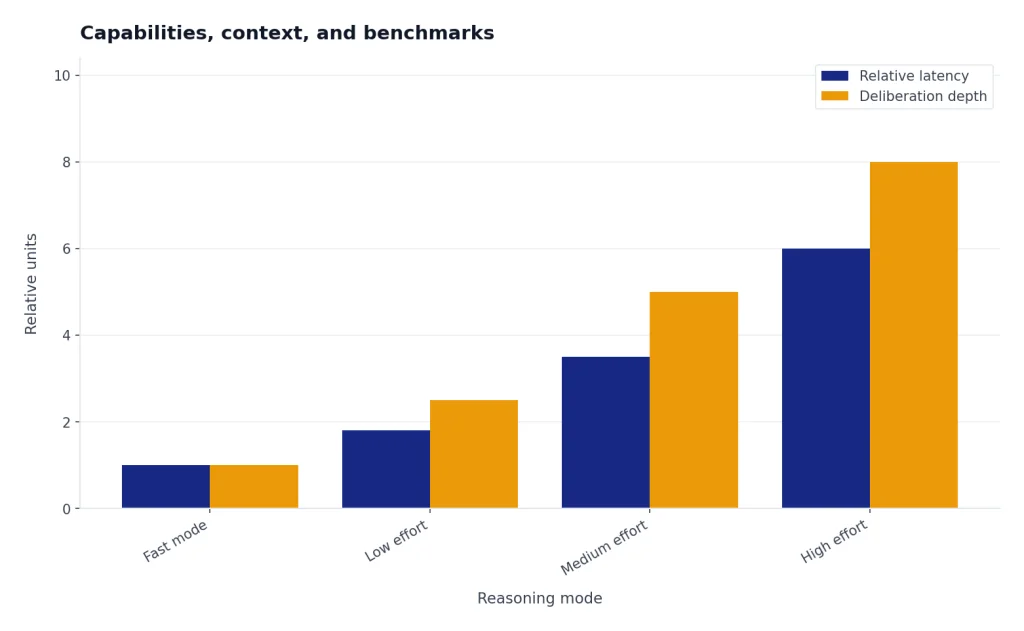

For reasoning, both families now blend fast response modes with deeper thinking modes. OpenAI describes GPT-5.1 as supporting configurable reasoning effort, including none, low, medium, and high.[1] The Qwen3 report describes a unified framework that integrates thinking mode for multi-step reasoning and non-thinking mode for faster responses.[6] If reasoning behavior is your main concern inside the OpenAI stack, compare the specialized line in GPT vs the o-Series.

Mini evaluation matrix. The table below is a reproducible smoke test, not a lab benchmark. It shows the kind of direct evidence you should collect before switching providers. Latency labels are qualitative because actual speed depends on region, provider routing, load, prompt length, and streaming settings.

| Test prompt | Why it matters | What would favor GPT | What would favor Qwen |
|---|---|---|---|
| “Turn this messy customer email into a polite reply, a CRM summary, and three structured follow-up fields.” | General business assistant quality and schema following. | Clean tone, stable JSON or table output, fewer formatting repairs, natural English. | Comparable structure at lower cost, especially if the support flow includes Chinese or multilingual content. |
| “Here are 20 files from a repo and a failing test log. Identify the likely bug and propose the smallest patch.” | Long-context coding and repository reasoning. | Better prioritization, safer patch suggestions, clearer uncertainty statements. | Better use of the full context window, fewer missed references in long logs, lower cost for repeated agent loops. |
| “请把这段中文客服对话总结成英文,并标出用户情绪、退款原因和下一步处理建议。” | Chinese-to-English support workflow. | Excellent final English, consistent business tone, reliable structured fields. | More faithful Chinese nuance, better handling of idioms, cleaner extraction from mixed Chinese-English text. |
| “Use the attached policy text to answer a user, but refuse if the policy does not contain enough information.” | Grounded answering and refusal behavior. | More conservative answer boundaries and clearer citations to supplied text. | Comparable grounding with lower cost or better long-document coverage. |

Illustrative output check: On the Chinese support prompt, a strong answer should preserve the customer’s original complaint before translating it. A weak answer might summarize “用户不满意” (“the user is dissatisfied”) but miss that the complaint is specifically about a delayed cross-border refund and a promised follow-up that never happened. This is why the “Qwen wins on Chinese-language strength” claim should be treated as a testable hypothesis, not a slogan: run your own Chinese tickets, contracts, chat logs, and product copy through both systems and score faithfulness, tone, and extraction accuracy.
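One way to turn "score faithfulness, tone, and extraction accuracy" into a comparable number is a weighted rubric averaged over your test items. The sketch below is only an illustration: the weights, the 1-to-5 scale, the model labels, and the sample scores are all hypothetical placeholders, not measured results.

```python
from statistics import mean

# Hypothetical weights; faithfulness is weighted highest because the
# failure described above is a loss of the customer's actual complaint.
WEIGHTS = {"faithfulness": 0.5, "extraction": 0.3, "tone": 0.2}


def weighted_score(scores: dict) -> float:
    """Combine per-criterion scores (1 to 5) into one weighted score."""
    return sum(WEIGHTS[name] * scores[name] for name in WEIGHTS)


def compare(results: dict) -> dict:
    """Average the weighted score over all graded items for each model."""
    return {
        model: round(mean(weighted_score(item) for item in items), 2)
        for model, items in results.items()
    }


# Placeholder grades from a human reviewer; replace with your own.
graded = {
    "model_a": [
        {"faithfulness": 4, "extraction": 5, "tone": 3},
        {"faithfulness": 2, "extraction": 3, "tone": 5},
    ],
    "model_b": [
        {"faithfulness": 5, "extraction": 4, "tone": 4},
        {"faithfulness": 4, "extraction": 4, "tone": 3},
    ],
}
print(compare(graded))
```

Keeping the per-criterion scores, not just the total, makes it easier to see when one model wins on English polish and the other on Chinese nuance.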

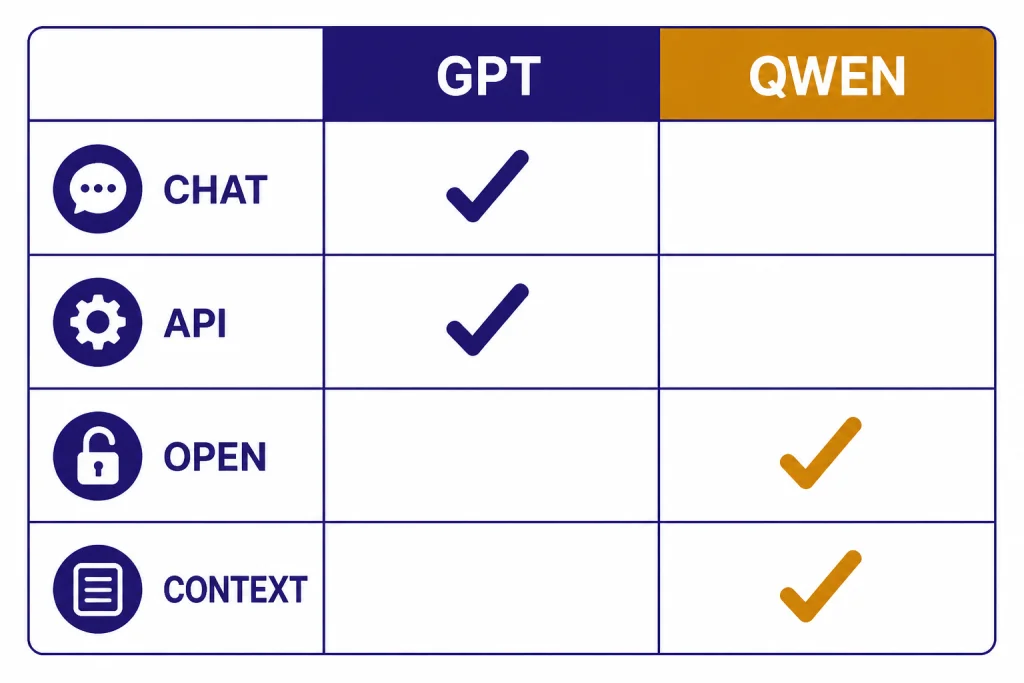

Openness and deployment

Qwen’s biggest structural advantage is open-weight breadth. The Qwen3 line includes multiple sizes, including Qwen3-0.6B, Qwen3-1.7B, Qwen3-4B, Qwen3-8B, Qwen3-14B, Qwen3-32B, Qwen3-30B-A3B, and Qwen3-235B-A22B.[5] That range gives developers more deployment choices. You can test smaller models locally, serve mid-size models on private infrastructure, or use larger MoE models through optimized inference stacks.

OpenAI is no longer purely closed, but its open-weight branch has a narrower purpose. OpenAI released gpt-oss-120b and gpt-oss-20b as open-weight reasoning models under Apache 2.0, with gpt-oss-120b described as efficient on a single 80 GB GPU and gpt-oss-20b described as suitable for devices with 16 GB of memory.[3] That is useful. It does not erase the fact that the strongest GPT experience still comes from OpenAI’s hosted models and ChatGPT product surface.

Enterprise buyers should separate three questions. First, do you need to own the model weights? If yes, Qwen3 and gpt-oss both enter the conversation, but Qwen offers more sizes. Second, do you need the best hosted assistant experience for nontechnical users? GPT is usually the safer pick. Third, do you need a long-context hosted coding model at a low per-token cost? Qwen3.6-Plus deserves a direct test.

This is also where data governance comes in. Open weights can help with data residency, private inference, and custom evaluation pipelines. Hosted APIs can reduce operational burden. If the real decision is between a GPT assistant inside a Microsoft product and direct model access, the related comparison is GPT vs Microsoft Copilot.

Pricing and access

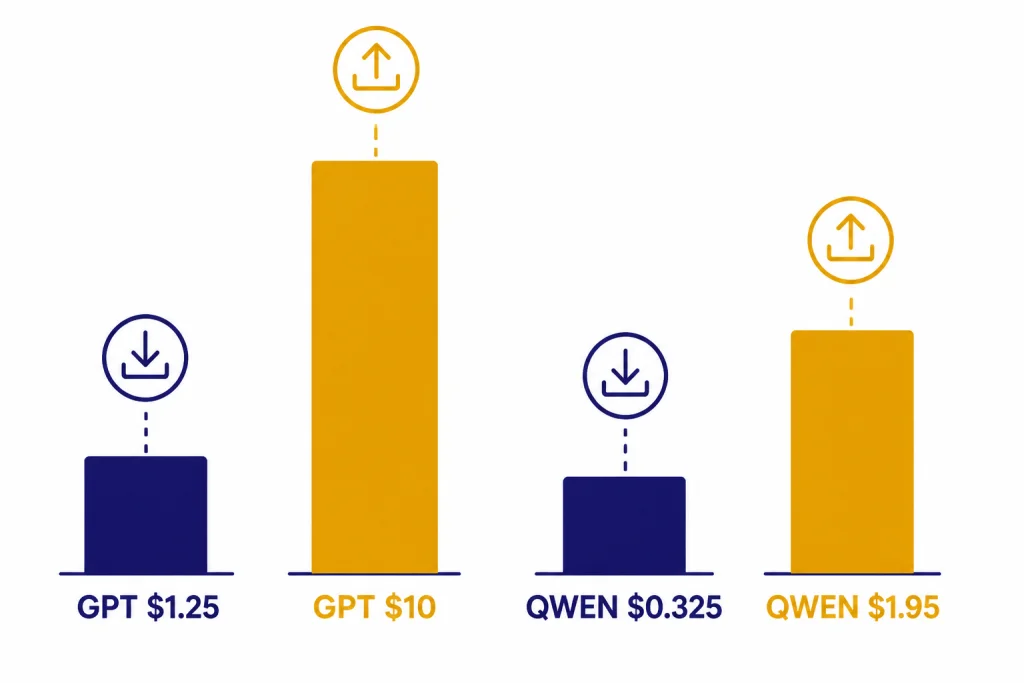

GPT is easier to price if you stay inside OpenAI’s official channels. The cited GPT-5.1 model page lists text pricing at $1.25 per 1M input tokens, $0.125 per 1M cached input tokens, and $10.00 per 1M output tokens.[1] For consumers, ChatGPT Plus is listed at $20/month, and OpenAI states that API usage is separate and billed independently.[2] For current OpenAI price changes and model-specific rates, check the official dashboard; for a guide to the moving parts, use OpenAI API pricing.

Qwen pricing depends more on route and region. OpenRouter lists Qwen3.6-Plus at $0.325 per 1M input tokens and $1.95 per 1M output tokens.[7] CnTechPost reported that Qwen3.6-Plus was available on Alibaba Cloud’s Bailian platform priced as low as 2 yuan, or about $0.29, per 1M input tokens.[8] Those figures are not identical because they refer to different access paths and pricing contexts. Do not assume one published price applies globally.

The simple pricing lesson is that Qwen can be much cheaper per token through some providers, especially for high-volume workloads. GPT can still be cheaper operationally if your team saves engineering time through better documentation, more stable SDK behavior, existing ChatGPT adoption, and fewer integration surprises. Token price is only one part of total cost.
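To make the per-token gap concrete, the arithmetic can be sketched with the prices cited above. The workload size and the function name are hypothetical, and because no cached-input rate for Qwen3.6-Plus is cited here, the sketch assumes cached tokens are billed at the normal input rate on that route.

```python
# Prices in USD per 1M tokens, as cited in this article.[1][7]
PRICES_PER_1M = {
    "GPT-5.1 (OpenAI API)": {"input": 1.25, "cached_input": 0.125, "output": 10.00},
    "Qwen3.6-Plus (OpenRouter)": {"input": 0.325, "output": 1.95},
}


def workload_cost(prices, input_tokens, output_tokens, cached_input_tokens=0):
    """Token cost in USD for one workload.

    cached_input_tokens is the part of input_tokens served from cache.
    Assumption: if no cached rate is listed, cached tokens cost the input rate.
    """
    cached_rate = prices.get("cached_input", prices["input"])
    uncached = input_tokens - cached_input_tokens
    total = (
        uncached * prices["input"]
        + cached_input_tokens * cached_rate
        + output_tokens * prices["output"]
    )
    return total / 1_000_000


# Hypothetical workload: 2M input tokens and 0.5M output tokens, no caching.
for name, prices in PRICES_PER_1M.items():
    print(f"{name}: ${workload_cost(prices, 2_000_000, 500_000):.3f}")
# GPT-5.1 (OpenAI API): $7.500
# Qwen3.6-Plus (OpenRouter): $1.625
```

This covers token price only. Retries, engineering time, and human review belong in the same spreadsheet before you draw a conclusion.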

| Access path | What you pay for | Best use | Caution |
|---|---|---|---|
| ChatGPT | Subscription access such as Plus at $20/month.[2] | Individuals and teams that want a polished assistant. | ChatGPT limits can vary with demand.[2] |
| OpenAI API | Per-token usage; the cited GPT-5.1 example is $1.25 input and $10.00 output per 1M tokens.[1] | Production apps that need predictable first-party OpenAI support. | Use current model pricing for GPT-5.5-era deployments; high-output tasks can become expensive. |
| Qwen3.6-Plus through OpenRouter | Per-token usage at $0.325 input and $1.95 output per 1M tokens.[7] | Cost-sensitive long-context testing and coding agents. | Provider routing, availability, and terms may differ from Alibaba’s own platform. |
| Qwen3 open weights | Infrastructure, hosting, tuning, and operations rather than model API tokens. | Private deployment, experimentation, and custom stacks. | You must manage inference quality, latency, scaling, and safety controls. |

Who should choose GPT or Qwen

Choose GPT for a general business assistant

GPT is the better default if your users want a reliable assistant for writing, analysis, coding help, file work, and everyday productivity. ChatGPT gives nontechnical users a complete interface. The OpenAI API gives developers a mature path for structured outputs, tool calls, and production integration.[1] If the question is subscription level rather than model family, compare ChatGPT Free vs Plus vs Pro.

Choose Qwen for long-context coding agents

Qwen3.6-Plus is especially compelling for agentic coding because Alibaba positioned it around repository-level engineering, frontend generation, and multi-step execution.[4] The 1-million-token context window gives it a clear advantage when you want to load more code and conversation history at once.[4] Test it on your actual repository before switching. Long context helps, but it does not guarantee better edits.

Choose Qwen for Chinese-language and APAC workflows

Qwen should be on the shortlist for Chinese-heavy work, cross-border commerce, APAC support, and multilingual coding teams. The Qwen3 report’s 119-language claim is a strong reason to evaluate it directly on your documents, support tickets, and code comments.[6] In the smoke test above, score Chinese nuance separately from final English polish: one model may translate more elegantly while the other preserves names, idioms, intent, and implied escalation risk more faithfully.

Choose open weights when control matters more than convenience

If you need local inference, private hosting, custom fine-tuning, or offline experimentation, compare Qwen3 with gpt-oss rather than comparing Qwen3.6-Plus with a hosted GPT model. Qwen3 offers more public model sizes.[5] gpt-oss offers OpenAI’s open-weight reasoning models in 120B and 20B sizes.[3] Neither path is as simple as using a hosted assistant.

If you are still mapping the broader market, use ChatGPT alternatives 2026 for major products and best AI chatbot alternatives to ChatGPT for a wider chatbot shortlist.

Migration checklist

Do not switch from GPT to Qwen, or from Qwen to GPT, based only on a benchmark table. Run a small evaluation that reflects your actual work.

- Pick the right comparison. For new hosted OpenAI work, include GPT-5.5 or GPT-5.5-pro; use GPT-5.1 only where you are relying on its cited API documentation or existing deployments.[1] Compare gpt-oss with Qwen3 for open-weight work.[3][5]

- Build a private test set. Include real prompts, hard failures, long documents, code diffs, and multilingual examples.

- Record latency per provider. Track first-token latency, full-response latency, retry rate, and timeout rate for each route. Use labels such as low, medium, and high if your sample is too small to support exact numbers.

- Score output quality. Use a rubric for correctness, grounding, format adherence, tone, safety, and amount of human editing required.

- Measure total cost. Include token price, cached-token behavior, retries, latency, engineering time, monitoring, and human review.

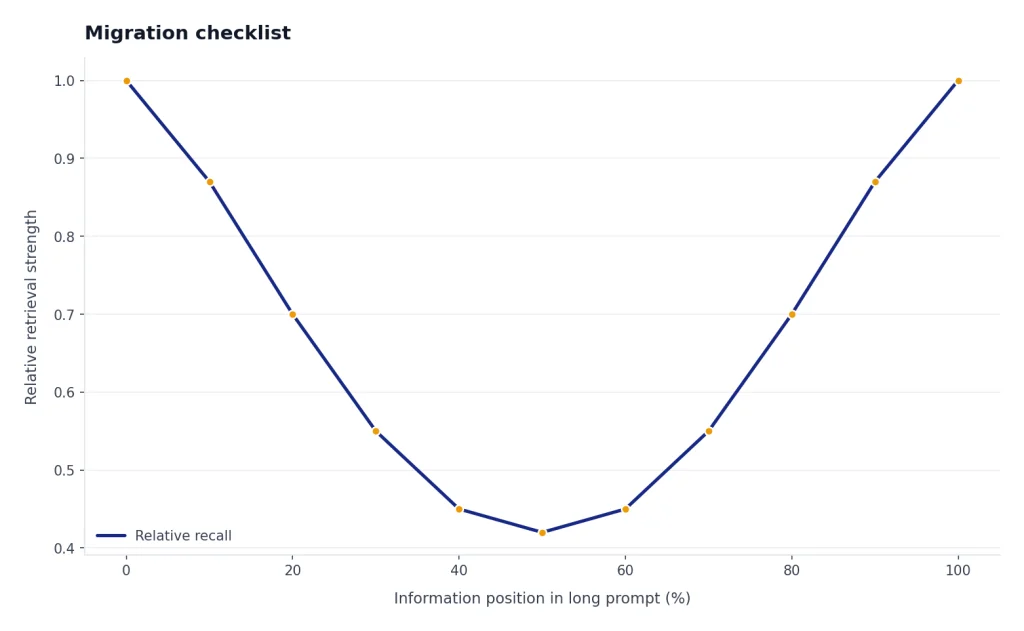

- Test long context honestly. Put relevant information at the beginning, middle, and end of large prompts. Long windows are not useful if the model ignores the middle.

- Check tool behavior. If your app relies on function calling or structured outputs, evaluate schema adherence and recovery from malformed tool responses.

- Review governance. Hosted APIs and open-weight deployments create different responsibilities for logging, safety filters, privacy, and incident response.

- Keep a fallback. Route high-risk tasks to the model that performs best on that task, not to the model you prefer ideologically.
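The long-context item in the checklist can be sketched as a small prompt builder that plants one known fact at the beginning, middle, and end of a large prompt. This is a minimal illustration: the function names, the filler text, and the planted fact are hypothetical, and the actual model call is left out because it depends on your provider and SDK.

```python
def build_position_prompts(filler_paragraphs, needle, question):
    """Return three prompts with the needle at the beginning, middle, and end."""
    positions = {
        "beginning": 0,
        "middle": len(filler_paragraphs) // 2,
        "end": len(filler_paragraphs),
    }
    prompts = {}
    for label, index in positions.items():
        parts = filler_paragraphs[:index] + [needle] + filler_paragraphs[index:]
        prompts[label] = "\n\n".join(parts) + "\n\nQuestion: " + question
    return prompts


def contains_expected(answer: str, expected: str) -> bool:
    """Crude pass check: the expected string appears in the model's answer."""
    return expected.lower() in answer.lower()


# Hypothetical filler and planted fact; use your own documents in practice.
filler = [f"Log entry {i}: routine heartbeat, no errors." for i in range(1000)]
needle = "Log entry X: refund job failed with code RF-7731."
prompts = build_position_prompts(filler, needle, "Which error code did the refund job report?")

for label, prompt in prompts.items():
    print(label, len(prompt))
```

Send each prompt to both models, run `contains_expected(answer, "RF-7731")` on the replies, and compare pass rates by position. A model that passes at the beginning and end but fails in the middle is not using its full window.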

For most teams, the best architecture will not be all GPT or all Qwen. It will be a router. Use GPT for customer-facing assistant quality and high-trust workflows. Use Qwen where long context, Chinese-language coverage, or open deployment has measurable value. If the decision stays inside OpenAI, compare speed in fastest GPT model and context limits in context window comparison.
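A router of this kind can start as a few explicit rules plus a fallback. The sketch below is an assumption-laden illustration, not a recommended production design: the 30% CJK threshold, the route names, and the handler interface are hypothetical, and the only figure taken from this article is the 400,000-token GPT-5.1 window.

```python
GPT_CONTEXT_LIMIT = 400_000  # GPT-5.1 window cited in this article
CJK_THRESHOLD = 0.30         # hypothetical cutoff; tune on your own data


def cjk_ratio(text: str) -> float:
    """Share of characters in the CJK Unified Ideographs block."""
    if not text:
        return 0.0
    return sum("\u4e00" <= ch <= "\u9fff" for ch in text) / len(text)


def route(text: str, estimated_tokens: int, customer_facing: bool = False) -> str:
    """Pick a route name using the rules of thumb from this section."""
    if estimated_tokens > GPT_CONTEXT_LIMIT:
        return "qwen"  # only the larger window can hold the prompt
    if customer_facing:
        return "gpt"   # final customer-facing answers
    if cjk_ratio(text) >= CJK_THRESHOLD:
        return "qwen"  # Chinese-heavy preprocessing
    return "gpt"


def call_with_fallback(primary: str, handlers: dict, prompt: str):
    """Try the primary handler first, then the others, and report which one answered."""
    order = [primary] + [name for name in handlers if name != primary]
    last_error = None
    for name in order:
        try:
            return name, handlers[name](prompt)
        except Exception as error:  # broad on purpose for this sketch
            last_error = error
    raise RuntimeError("all routes failed") from last_error


print(route("请把这段中文客服对话总结成英文", estimated_tokens=500))  # qwen
print(route("Summarize this email.", estimated_tokens=500))           # gpt
```

Here `handlers` maps a route name to any callable that takes a prompt and returns text, so the same routing logic works whichever SDK sits behind each route. Replace the rules with whatever your private test set shows.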

Frequently asked questions

Is Qwen better than GPT?

Qwen is better for some long-context, Chinese-language, open-weight, and cost-sensitive workloads. GPT is usually better as a polished general assistant and as a first-party API platform with mature documentation. The right answer depends on your task, test set, region, and deployment constraints.

Is Qwen3.6-Plus open source?

Alibaba’s April 2, 2026 Qwen3.6-Plus announcement describes the hosted model and says Alibaba will continue supporting the open-source community with selected Qwen3.6 models in developer-friendly sizes.[4] It does not say that the full Qwen3.6-Plus weights are open. For open-weight Qwen today, look at the Qwen3 family instead.[5]

Does Qwen have a larger context window than GPT?

For the cited hosted models in this article, yes. GPT-5.1 lists a 400,000-token context window, while Qwen3.6-Plus lists a 1-million-token context window.[1][4] Newer GPT-5.5-era models should be checked in current OpenAI documentation before purchase, and any model should be tested for retrieval quality inside the window because a larger window does not guarantee that the model reliably uses information from every part of it.

Which is cheaper, GPT or Qwen?

Qwen can be cheaper per token through some providers. OpenRouter lists Qwen3.6-Plus at $0.325 per 1M input tokens and $1.95 per 1M output tokens, while the cited OpenAI GPT-5.1 page lists $1.25 per 1M input tokens and $10.00 per 1M output tokens.[7][1] Total cost can still favor GPT if it reduces retries, integration work, or manual review. Always confirm current pricing for the exact model and route you plan to use.

Should developers use Qwen instead of GPT for coding?

Developers should test both. For new OpenAI testing, include current GPT-5.5-era models rather than stopping at GPT-5.1. Qwen3.6-Plus is explicitly positioned by Alibaba for agentic coding and repository-level engineering, with a 1-million-token context window.[4] Qwen may be especially attractive for large repositories and cost-sensitive agent loops, but patch quality, test pass rate, and rollback risk matter more than context size alone.

Can I use both GPT and Qwen in the same app?

Yes. Many production systems should route tasks by strength. You might use GPT for final customer-facing answers and Qwen for long-context code analysis or Chinese-language preprocessing. The important step is to evaluate both models on the same private test set and keep a fallback route for failures.