DALL-E is the easier choice if you want a polished image from a plain-English prompt with minimal setup. Stable Diffusion is the better choice if you need lower-cost experimentation, local control, custom models, or production workflows that you can tune. There is no single winner on cost: DALL-E 3 has simple per-image API pricing, while Stable Diffusion can mean either self-hosting the open weights or paying for Stability AI’s hosted APIs. Quality also depends on the job. DALL-E tends to win on convenience and prompt interpretation; Stable Diffusion wins on customization, repeatability, and control.

Short answer

For most casual users, DALL-E is better because it is simpler. You describe the image, ChatGPT or the API rewrites the prompt when needed, and you get a finished result without managing models, checkpoints, samplers, GPUs, or workflows. OpenAI says DALL-E 3 is available to ChatGPT users and developers through the API, and its product page emphasizes stronger adherence to text prompts than earlier DALL-E versions.[2]

For serious image pipelines, Stable Diffusion often has the stronger long-term value. Stability AI released Stable Diffusion 3.5 as an open model family with Large, Large Turbo, and Medium variants, and says the models can be downloaded from Hugging Face with inference code on GitHub.[5] That changes the economics. You can pay a hosted API per image, or you can self-host and shift the cost to hardware, utilization, engineering time, and maintenance.

As of May 2026, OpenAI’s current image lineup also includes newer GPT Image models, including gpt-image-2. That matters because many people casually say “DALL-E” when they mean any OpenAI image generator. This article compares DALL-E 3 pricing and workflow against Stable Diffusion-style options; if you are choosing across the full OpenAI image stack, treat GPT Image as a separate option with a different pricing model.

The practical answer is this: choose DALL-E for fast, clean, general-purpose images. Choose Stable Diffusion for volume, custom styles, local generation, fine-tuning, and controlled production.

Cost comparison

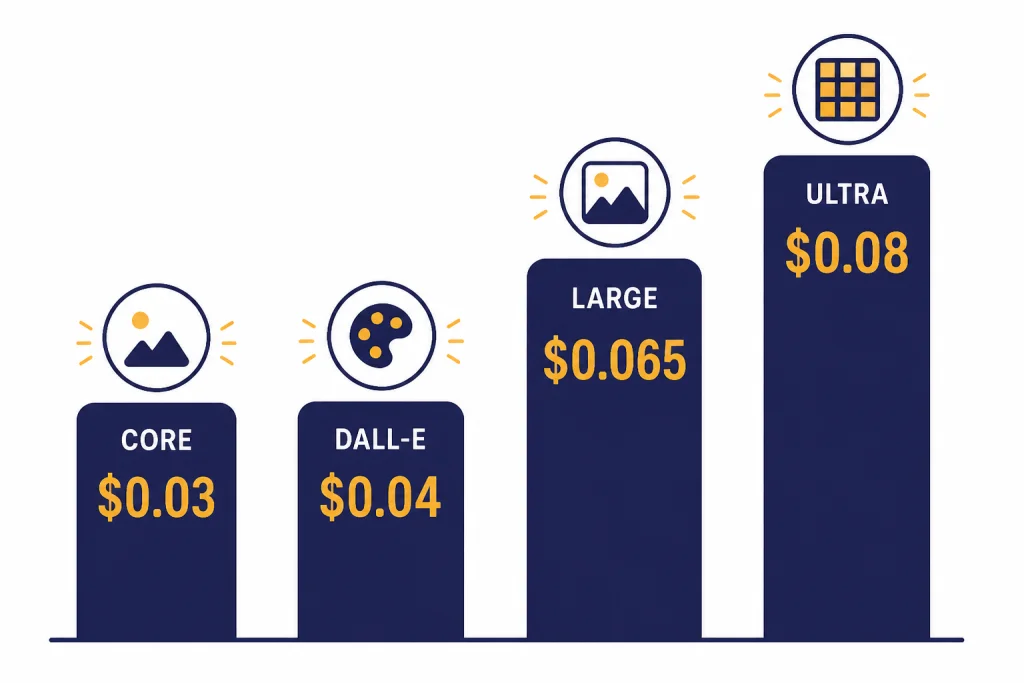

DALL-E 3 is easier to price because OpenAI publishes per-image API prices by size and quality. The DALL-E 3 API table historically uses 1024×1024, 1024×1792, and 1792×1024 sizes, not the 1024×1536 and 1536×1024 sizes used by some GPT Image workflows. DALL-E 3 standard costs $0.04 for a 1024×1024 image and $0.08 for either 1024×1792 or 1792×1024.[1] DALL-E 3 HD costs $0.08 for 1024×1024 and $0.12 for either 1024×1792 or 1792×1024.[1]
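Because the DALL-E 3 table is a lookup by quality and size, budgeting a batch is plain multiplication. The Python sketch below hard-codes the prices quoted above (they can change, so check OpenAI’s pricing page) and rejects the 1024×1536 and 1536×1024 sizes, which belong to GPT Image workflows rather than DALL-E 3. The function and dictionary names are illustrative, not part of any OpenAI SDK.

```python
# DALL-E 3 per-image API prices in USD, keyed by (quality, size).
# Copied from the pricing described above; verify before relying on them.
DALLE3_PRICES = {
    ("standard", "1024x1024"): 0.04,
    ("standard", "1024x1792"): 0.08,
    ("standard", "1792x1024"): 0.08,
    ("hd", "1024x1024"): 0.08,
    ("hd", "1024x1792"): 0.12,
    ("hd", "1792x1024"): 0.12,
}


def dalle3_batch_cost(quality: str, size: str, images: int) -> float:
    """Return the estimated API cost of generating `images` DALL-E 3 images."""
    key = (quality, size)
    if key not in DALLE3_PRICES:
        # 1024x1536 and 1536x1024 are GPT Image sizes, not DALL-E 3 sizes.
        raise ValueError(f"no DALL-E 3 price for quality={quality!r}, size={size!r}")
    if images < 0:
        raise ValueError("images must be non-negative")
    return round(DALLE3_PRICES[key] * images, 2)


print(dalle3_batch_cost("standard", "1024x1024", 100))  # 4.0
print(dalle3_batch_cost("hd", "1792x1024", 100))        # 12.0
```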

Stable Diffusion is not a single product with a single price. It helps to separate three categories:

- Open weights / self-hosted Stable Diffusion: you download model weights such as Stable Diffusion 3.5 Large or Medium and run them on your own local GPU or cloud GPU. The model may be free to use for qualifying users under Stability AI’s license, but your real cost is compute, setup, monitoring, storage, and staff time.[5][9][10]

- Stability hosted APIs: you pay for hosted generation through Stability’s API products or credit system. This is closer to DALL-E operationally, because you avoid running the model yourself.

- Stability-branded image products: names such as Stable Image Core and Stable Image Ultra are hosted products, not the same thing as downloading and operating a Stable Diffusion checkpoint locally.[6]

Third-party pricing trackers that cite Stability’s developer pricing list Stable Image Core at $0.03 per image, Stable Diffusion 3.5 Medium at $0.035, Stable Diffusion 3.5 Large Turbo at $0.04, Stable Diffusion 3.5 Large at $0.065, and Stable Image Ultra at $0.08.[7][8] Treat these as hosted API reference points, not as the cost of self-hosted Stable Diffusion.

| Option | Typical cost basis | Representative price | Best cost fit |
|---|---|---|---|
| DALL-E 3 standard | OpenAI API per image | $0.04 for 1024×1024; $0.08 for 1024×1792 or 1792×1024 | Simple paid generation with predictable billing |
| DALL-E 3 HD | OpenAI API per image | $0.08 for 1024×1024; $0.12 for 1024×1792 or 1792×1024 | Higher-quality outputs without model operations |
| Stable Image Core | Stability hosted API credits | Reported at $0.03 per generation | Lower-cost hosted image generation |
| Stable Diffusion 3.5 Large Turbo | Stability hosted API credits | Reported at $0.04 per generation | Fast hosted generation near DALL-E standard square pricing |
| Stable Diffusion 3.5 Large | Stability hosted API credits | Reported at $0.065 per generation | Higher-quality Stable Diffusion output without self-hosting |
| Stable Image Ultra | Stability hosted API credits | Reported at $0.08 per generation | Premium hosted Stability output |
| Stable Diffusion self-hosted | Hardware or cloud compute | No fixed per-image API fee from Stability for local runs | High-volume experimentation and custom workflows when utilization is high |

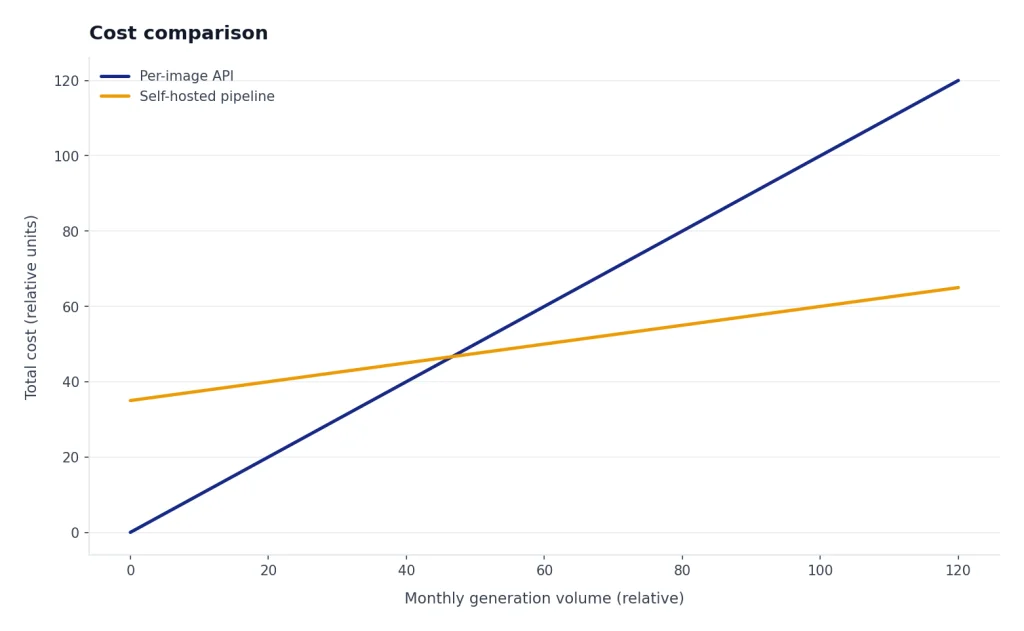

The cheapest path depends on volume and utilization. If you make a few images per week, DALL-E’s simplicity can be worth more than saving a cent or two per generation. If you generate thousands of candidates, train LoRAs, or run many variations before selecting one final image, Stable Diffusion can become cheaper, because rejected iterations on self-hosted hardware add only marginal compute cost rather than a full per-image API fee.

That caveat is important. A self-hosted cost chart should not be read as a universal crossover point. A team with an idle in-house GPU and a stable workflow may reach low marginal cost quickly. A team renting expensive cloud GPUs, generating only occasionally, or paying engineers to maintain queues and model updates may spend more than it would through a hosted API. For a fair internal estimate, calculate:

- Fixed cost: GPU purchase or monthly cloud reservation, setup time, storage, monitoring, and security review.

- Variable cost: electricity or cloud runtime, retries, upscaling, moderation, and failed generations.

- Throughput: accepted images per hour, not just raw images per hour.

- Utilization: whether the GPU is busy most of the day or sits idle between campaigns.

- Human cost: prompt tuning, model selection, LoRA training, QA, and pipeline maintenance.
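The checklist above can be turned into a rough monthly model. The following Python sketch is illustrative only: the class, function names, and every number in the example are hypothetical placeholders rather than measured costs, and the break-even formula assumes costs grow linearly with volume. It divides total monthly spend by accepted images (not raw outputs) and solves fixed + marginal × N = API price × N for N.

```python
from dataclasses import dataclass


@dataclass
class SelfHostedMonth:
    """Hypothetical monthly inputs for a self-hosted Stable Diffusion setup (USD)."""
    fixed_cost: float         # GPU amortization or reservation, storage, monitoring
    human_cost: float         # prompt tuning, QA, LoRA training, maintenance
    gpu_hour_cost: float      # electricity or cloud runtime per busy GPU hour
    busy_gpu_hours: float     # hours the GPU actually spends generating
    accepted_per_hour: float  # accepted images per busy hour, not raw outputs


def cost_per_accepted(m: SelfHostedMonth) -> float:
    """Total monthly spend divided by the images that passed review."""
    accepted = m.accepted_per_hour * m.busy_gpu_hours
    if accepted <= 0:
        raise ValueError("no accepted images this month")
    total = m.fixed_cost + m.human_cost + m.gpu_hour_cost * m.busy_gpu_hours
    return total / accepted


def break_even_volume(m: SelfHostedMonth, api_cost_per_accepted: float) -> float:
    """Accepted images per month at which self-hosting matches a per-image API.

    Solves (fixed + human) + marginal * N == api * N for N. Returns infinity
    when the self-hosted marginal cost is not below the API price.
    """
    marginal = m.gpu_hour_cost / m.accepted_per_hour
    if api_cost_per_accepted <= marginal:
        return float("inf")
    return (m.fixed_cost + m.human_cost) / (api_cost_per_accepted - marginal)


# Same hardware and staff costs, different utilization (all values hypothetical).
idle = SelfHostedMonth(fixed_cost=1500, human_cost=2000, gpu_hour_cost=1.0,
                       busy_gpu_hours=50, accepted_per_hour=50)
busy = SelfHostedMonth(fixed_cost=1500, human_cost=2000, gpu_hour_cost=1.0,
                       busy_gpu_hours=600, accepted_per_hour=50)
print(round(cost_per_accepted(idle), 2))  # 1.42
print(round(cost_per_accepted(busy), 2))  # 0.14
print(round(break_even_volume(busy, api_cost_per_accepted=0.04)))  # 175000
```

With these made-up inputs, both setups cost more per accepted image than a $0.04 API call. The break-even of 175,000 accepted images a month would need about 3,500 busy GPU hours at 50 accepted images per hour, far more than one GPU can run in a 720-hour month, which is why a single crossover chart can mislead.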

There is one more naming trap. Many users say “DALL-E” when they mean OpenAI image generation inside ChatGPT. OpenAI’s GPT Image API is priced by tokens, not by the same DALL-E 3 per-image table. OpenAI listed gpt-image-1 pricing at $5 per 1M text input tokens, $10 per 1M image input tokens, and $40 per 1M image output tokens when it introduced the model in the API.[4] For a strict DALL-E vs Stable Diffusion comparison, use the DALL-E 3 API table above. For a current OpenAI image stack comparison, include GPT Image separately.
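Because GPT Image bills by tokens, per-image cost depends on how many tokens a request consumes. This Python sketch converts token counts into dollars using the gpt-image-1 launch prices quoted above. The token counts in the example are hypothetical placeholders, not figures published by OpenAI, so measure real usage before budgeting.

```python
# gpt-image-1 launch prices quoted above, in USD per 1M tokens.
PRICE_PER_MILLION_TOKENS = {
    "text_input": 5.00,
    "image_input": 10.00,
    "image_output": 40.00,
}


def gpt_image_request_cost(text_in: int, image_in: int, image_out: int) -> float:
    """Estimate one request's cost in USD from its token counts."""
    p = PRICE_PER_MILLION_TOKENS
    return (
        text_in * p["text_input"]
        + image_in * p["image_input"]
        + image_out * p["image_output"]
    ) / 1_000_000


# Hypothetical request: 100 prompt tokens, no input image, 4,000 output tokens.
print(f"${gpt_image_request_cost(100, 0, 4_000):.4f}")  # $0.1605
```

At these prices output tokens dominate: every 1,000 image output tokens adds $0.04.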

Quality comparison

DALL-E quality is strongest when the prompt is a normal human request: “make a product hero image,” “turn this idea into a poster,” or “create a clear educational diagram.” OpenAI says DALL-E 3 is built natively on ChatGPT, so ChatGPT can refine a simple request into a more detailed prompt and help revise the image with follow-up instructions.[2] That is the biggest quality advantage for non-experts. The system does a lot of prompt work for you.

Stable Diffusion quality is strongest when the user controls the pipeline. Stability AI describes Stable Diffusion 3.5 Large as an 8.1 billion parameter model for professional use at 1 megapixel resolution, with Large Turbo as a faster distilled variant and Medium as a 2.5 billion parameter model designed for consumer hardware.[5] Those variants let you choose the quality-speed-control balance instead of accepting one hosted behavior.
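A back-of-envelope calculation shows why parameter count matters for hardware. At 16-bit precision (fp16 or bf16) each parameter takes 2 bytes, so weight memory in gigabytes is roughly the parameter count in billions × 2. The Python sketch below applies that rule to the parameter counts Stability AI publishes. It covers the main model weights only and ignores text encoders, the VAE, and activations, so real VRAM requirements will be higher.

```python
def weight_memory_gb(params_billion: float, bytes_per_param: float = 2.0) -> float:
    """Approximate memory for model weights alone, in decimal GB (1e9 bytes).

    2 bytes per parameter corresponds to fp16/bf16 storage. Text encoders,
    the VAE, and activations are NOT included.
    """
    # (params_billion * 1e9 params) * bytes_per_param / 1e9 bytes per GB
    return params_billion * bytes_per_param


for name, params in [("SD 3.5 Large", 8.1), ("SD 3.5 Medium", 2.5)]:
    print(f"{name}: ~{weight_memory_gb(params):.1f} GB of 16-bit weights")
# SD 3.5 Large: ~16.2 GB of 16-bit weights
# SD 3.5 Medium: ~5.0 GB of 16-bit weights
```

Passing bytes_per_param=1.0 models an 8-bit quantized checkpoint and halves the estimate.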

Text rendering is closer than it used to be. DALL-E 3 was known for improving prompt following and text-in-image behavior compared with earlier tools. Stable Diffusion 3.5 also emphasizes typography and complex prompt understanding in its model cards.[9] In real work, neither tool should be trusted blindly for final packaging copy, legal disclaimers, medical labels, or financial figures. Generate the composition, then inspect and typeset critical text in a design tool.

Independent benchmark culture also matters. Artificial Analysis describes its text-to-image leaderboard as a human-preference ranking based on votes in an image arena, and notes that proprietary and open models can trade places depending on model and setting.[11] That is a useful reminder: quality is not a single number. A model can be excellent for portraits and weaker for diagrams. It can follow style well and still miss object counts. It can generate beautiful images and still fail brand consistency.

For a cost-and-quality decision, the best test is a small same-prompt bakeoff using your own acceptance standard. Here is a practical mini-test you can run before committing to either system:

- Use the same five prompts in both tools: one product shot, one poster with short text, one diagram, one realistic person-free scene, and one brand-style variation.

- For each prompt, allow a fixed number of attempts, such as one first pass plus two revisions. Do not keep retrying one tool indefinitely.

- Record whether the first image was usable, whether text was correct, how many revisions were needed, and whether the final accepted image required manual editing.

- Calculate cost per accepted image, not just cost per generation. A cheaper model that needs five retries may be more expensive than a higher-priced model that succeeds on the first or second attempt.
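Cost per accepted image is easy to compute once the bakeoff results are recorded. The Python sketch below uses a hypothetical record type, BakeoffResult, and illustrative prices. It counts every attempt, optionally adds manual editing time at an assumed hourly rate, and divides by the number of accepted images.

```python
from dataclasses import dataclass


@dataclass
class BakeoffResult:
    """One prompt's outcome in a same-prompt bakeoff (hypothetical record format)."""
    prompt_id: str
    attempts: int                     # first pass plus revisions actually used
    accepted: bool                    # did any attempt meet the acceptance standard?
    manual_edit_minutes: float = 0.0  # cleanup time on the accepted image


def cost_per_accepted_image(results, price_per_generation, editor_rate_per_hour=0.0):
    """Generation spend plus optional editing time, divided by accepted images."""
    accepted = sum(1 for r in results if r.accepted)
    if accepted == 0:
        return float("inf")
    generation = sum(r.attempts for r in results) * price_per_generation
    editing = sum(r.manual_edit_minutes for r in results) / 60 * editor_rate_per_hour
    return (generation + editing) / accepted


# Hypothetical run: 5 prompts, at most 3 attempts each (first pass + 2 revisions).
cheap = [BakeoffResult(f"p{i}", attempts=3, accepted=i < 3) for i in range(5)]
pricey = [BakeoffResult(f"p{i}", attempts=a, accepted=True)
          for i, a in enumerate([1, 1, 2, 1, 2])]
print(round(cost_per_accepted_image(cheap, 0.03), 3))   # 0.15
print(round(cost_per_accepted_image(pricey, 0.08), 3))  # 0.112
```

In this made-up run the $0.03 option ends up at about $0.15 per accepted image, while the $0.08 option lands near $0.11, because it needed fewer attempts and every prompt passed.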

| Test prompt | What to inspect | DALL-E expectation | Stable Diffusion expectation |
|---|---|---|---|
| “Create a clean ecommerce hero image for a matte black desk lamp on a warm neutral background.” | Product realism, shadows, composition, editing time | Often strong first-pass polish | Can be excellent with the right model and settings; may need workflow tuning |
| “Design a square poster that says ‘SPRING SALE’ in large readable letters, with flowers and a simple border.” | Exact text, spelling, layout, need for manual typesetting | Good short-text attempt, but still review carefully | Improved typography in newer models, but still review carefully |
| “Make a simple educational diagram showing rainwater flowing from a roof into a barrel and then into a garden.” | Object relationships, arrows, clarity, factual layout | Usually strong at interpreting the plain-language diagram request | Can work well, especially with control workflows or post-editing |
| “Create three images in the same cozy watercolor style for a children’s story about a fox, a teapot, and a moonlit forest.” | Style consistency across multiple outputs | Good for early concepts | Stronger when using seeds, references, LoRAs, or a locked workflow |

This table is a test plan, not a universal benchmark. Your results will vary by prompt, model version, safety settings, image size, sampler, seed, and revision process. The main point is to compare accepted outputs, not cherry-picked favorites.

| Quality dimension | DALL-E | Stable Diffusion |
|---|---|---|
| Prompt interpretation | Very strong for natural-language requests, especially through ChatGPT | Strong, but more dependent on model, prompt style, sampler, and workflow |
| Visual polish | Usually polished out of the box | Can be excellent, but often needs model and settings choices |
| Text in images | Good for short labels, but still requires review | Improved in SD 3.5, but still requires review |
| Style control | Good broad styles, less granular control | Excellent with checkpoints, LoRAs, ControlNet-style workflows, and references |
| Character consistency | Usable for simple iteration, but limited control | Stronger when using trained references, LoRAs, seeds, and structured workflows |
| Production repeatability | Simple, but less transparent | High if you lock model, seed, parameters, and workflow |

Workflow and control

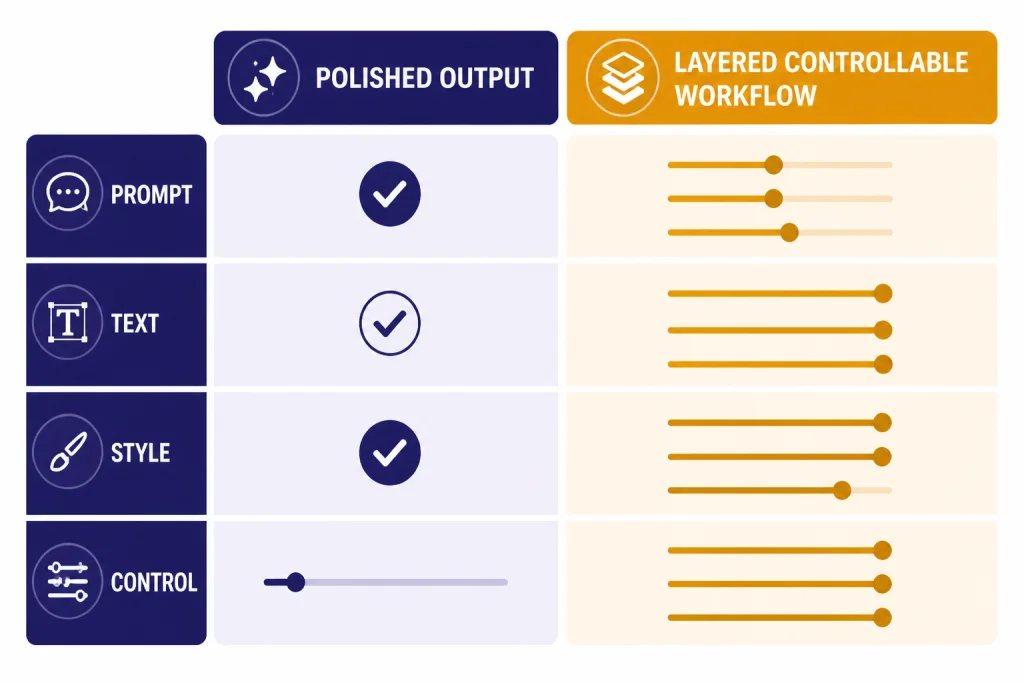

The workflow difference is the real divide. DALL-E is a finished product. Stable Diffusion is a model ecosystem. DALL-E asks you to describe the result. Stable Diffusion asks you to decide how the result should be produced.

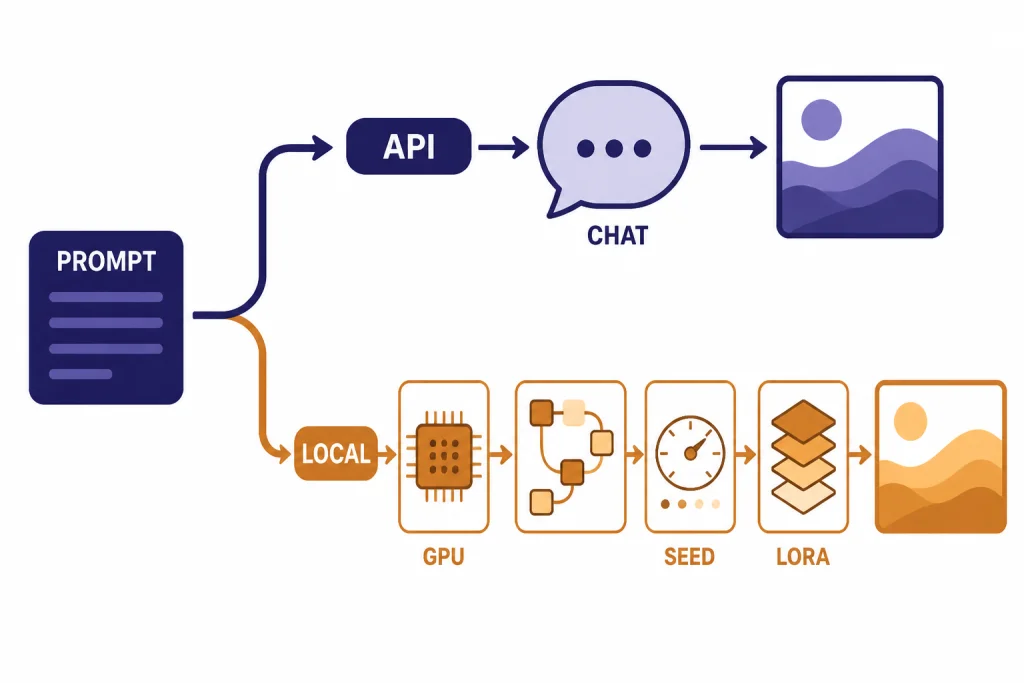

OpenAI’s API reference lists DALL-E 3 under image generation and shows the API endpoint as v1/images/generations.[1] It also notes that DALL-E 3 supports one image per API call, so users who want more than one output should make parallel calls.[3] That works well for apps that need a clean “prompt in, image out” path.
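Since DALL-E 3 returns one image per call, getting several candidates means fanning out single-image requests. This is a minimal standard-library Python sketch, assuming an OPENAI_API_KEY environment variable and the Images API request fields model, prompt, n, size, and quality; verify them against OpenAI’s current API reference before use. The fake_send stub is hypothetical and exists only so the fan-out logic can run without network access.

```python
import json
import os
import urllib.request
from concurrent.futures import ThreadPoolExecutor

API_URL = "https://api.openai.com/v1/images/generations"


def dalle3_payload(prompt: str, size: str = "1024x1024", quality: str = "standard") -> dict:
    # DALL-E 3 accepts one image per call, so n is always 1.
    return {"model": "dall-e-3", "prompt": prompt, "n": 1, "size": size, "quality": quality}


def send_request(payload: dict) -> dict:
    """POST one payload to the Images API and return the parsed JSON response."""
    req = urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {os.environ['OPENAI_API_KEY']}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=120) as resp:
        return json.load(resp)


def generate_variants(prompt: str, count: int, send=send_request, max_workers: int = 4) -> list:
    """Collect `count` images by making `count` parallel single-image calls."""
    payloads = [dalle3_payload(prompt) for _ in range(count)]
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(send, payloads))


def fake_send(payload: dict) -> dict:
    # Hypothetical offline stub; a stand-in response, not the full API schema.
    return {"data": [{"url": "https://example.invalid/image.png"}]}


print(len(generate_variants("matte black desk lamp hero image", 3, send=fake_send)))  # 3
```

A production version would also need retry and rate-limit handling; max_workers simply caps how many requests run at once.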

Stable Diffusion supports a more modular approach. The Stable Diffusion 3.5 Large model card points users to ComfyUI for node-based inference and Diffusers or GitHub for programmatic use.[9] That means teams can build repeatable image pipelines with reference images, masks, seeds, schedulers, upscalers, safety checks, and post-processing. This is more work, but it gives designers and developers much more control.
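Repeatability mostly comes down to recording every setting that shapes an output. The Python sketch below stores a hypothetical locked-workflow record (the field names, model label, and values are illustrative, not a Diffusers or ComfyUI schema) and hashes it so each saved image can be traced back to its exact settings. Identical settings do not always guarantee pixel-identical images across different GPUs or library versions, so pin those too.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, replace


@dataclass(frozen=True)
class LockedWorkflow:
    """Settings that shape one Stable Diffusion output (hypothetical schema)."""
    model: str              # checkpoint name and version
    prompt: str
    negative_prompt: str
    seed: int
    steps: int
    guidance_scale: float
    scheduler: str
    width: int
    height: int
    loras: tuple = ()       # ordered (name, weight) pairs

    def fingerprint(self) -> str:
        """Short, stable hash of every setting; store it beside each output."""
        blob = json.dumps(asdict(self), sort_keys=True)
        return hashlib.sha256(blob.encode("utf-8")).hexdigest()[:16]


base = LockedWorkflow("example-sd35-large-v1", "cozy watercolor fox, moonlit forest",
                      "", seed=1234, steps=28, guidance_scale=4.5,
                      scheduler="example-scheduler", width=1024, height=1024)
print(base.fingerprint() == replace(base).fingerprint())             # True
print(base.fingerprint() == replace(base, seed=1235).fingerprint())  # False
```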

- Choose DALL-E when a marketer, founder, teacher, or editor needs a good image quickly.

- Choose Stable Diffusion when an artist, game studio, ecommerce team, or developer needs repeatable visual systems.

- Choose DALL-E when you do not want to manage GPUs or model files.

- Choose Stable Diffusion when you want local generation, private experiments, custom styles, or fine-tuned outputs.

The hidden cost is operational complexity. With DALL-E, your app mostly handles prompt submission, billing, storage, and review. With self-hosted Stable Diffusion, your app may also handle GPU provisioning, model downloads, dependency updates, queue management, VRAM limits, prompt templates, seed storage, output filtering, and fallback behavior when a workflow breaks.
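Fallback behavior is one of those hidden responsibilities. One common pattern is an ordered chain of backends tried in turn. The Python sketch below assumes each backend is a callable that takes a prompt and returns image bytes; local_sd and hosted_api are hypothetical stand-ins, not real clients.

```python
from typing import Callable, List, Sequence, Tuple

Backend = Tuple[str, Callable[[str], bytes]]


class AllBackendsFailed(RuntimeError):
    """Raised when every backend in the chain fails for a prompt."""


def generate_with_fallback(prompt: str, backends: Sequence[Backend]) -> Tuple[str, bytes]:
    """Try each (name, generate) backend in order and return the first success."""
    errors: List[str] = []
    for name, generate in backends:
        try:
            return name, generate(prompt)
        except Exception as exc:  # a real pipeline would catch narrower errors and log them
            errors.append(f"{name}: {exc}")
    raise AllBackendsFailed("; ".join(errors))


def local_sd(prompt: str) -> bytes:
    # Hypothetical self-hosted workflow that has hit a VRAM limit.
    raise RuntimeError("out of GPU memory")


def hosted_api(prompt: str) -> bytes:
    # Hypothetical hosted fallback; returns placeholder bytes, not a real image.
    return b"placeholder-image-bytes"


print(generate_with_fallback("sticker of a fox", [("local", local_sd), ("hosted", hosted_api)])[0])
# hosted
```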

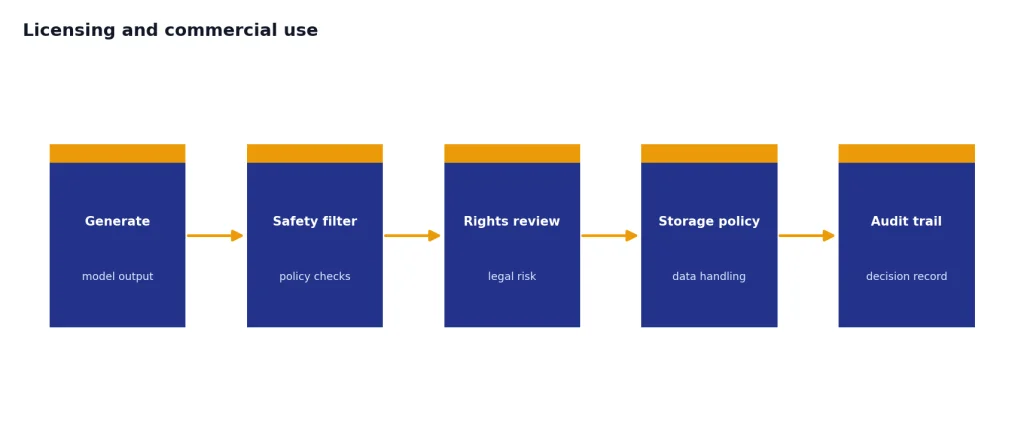

Licensing and commercial use

DALL-E is simpler from a user-facing rights perspective. OpenAI says images created with DALL-E 3 are yours to use and that you do not need OpenAI’s permission to reprint, sell, or merchandise them.[2] That does not remove every legal risk. You still need to avoid trademark misuse, misleading endorsements, privacy violations, and rights issues in uploaded reference images.

Stable Diffusion has more licensing flexibility, but also more responsibility. Stability AI says Stable Diffusion 3.5 is free for non-commercial use and free for commercial use up to $1M in annual revenue under the Stability AI Community License.[5] Hugging Face model cards for Stable Diffusion 3.5 Large and Medium state the same less-than-$1M commercial threshold and direct larger organizations to contact Stability AI for an Enterprise License.[9][10]

The legal difference is practical. With DALL-E, you are buying access to a managed service with OpenAI’s moderation and product constraints. With Stable Diffusion, you may be operating the model yourself. That can help with privacy and customization, but it also means your team owns more of the safety review, content filtering, copyright policy, storage policy, and audit process.

For commercial work, do not treat either tool as a legal shield. Review final assets for logos, recognizable people, copyrighted characters, sensitive claims, and text accuracy. If you use uploaded references, confirm that you have the right to use those references in the generation workflow.

Best use cases

DALL-E is best for fast general-purpose creation

DALL-E works well for blog illustrations, simple ad concepts, social media visuals, classroom graphics, mood boards, presentation images, and early product mockups. It is especially useful when the person requesting the image is not an image-model specialist. The prompt can be conversational, and ChatGPT can help turn an idea into a better visual brief.

Stable Diffusion is best for custom production systems

Stable Diffusion is better for visual systems that need many controlled outputs. Examples include ecommerce product backgrounds, game assets, character sheets, style-consistent campaign images, architecture concepts, sticker packs, and internal design tools. The more you care about seeds, repeatability, LoRAs, masks, or local data handling, the more Stable Diffusion makes sense.

Neither is always the best creative model

DALL-E and Stable Diffusion are not the only options. Midjourney remains a common choice for highly stylized image generation, and video tools are a separate category. The best creative choice can change by medium: still images, brand systems, diagrams, animation, and video each reward different tooling.

Related reading: For other image-model comparisons, see our DALL-E vs Midjourney comparison. For OpenAI billing context, use the OpenAI API pricing guide. If you are comparing open and closed model ecosystems more broadly, the GPT vs Llama breakdown covers the same tradeoff for language models. If your project is moving from still images to video, compare Sora vs Runway and Sora vs Google Veo. If you are comparing broader assistants rather than image engines, see our AI chatbot alternatives list.

Which should you choose

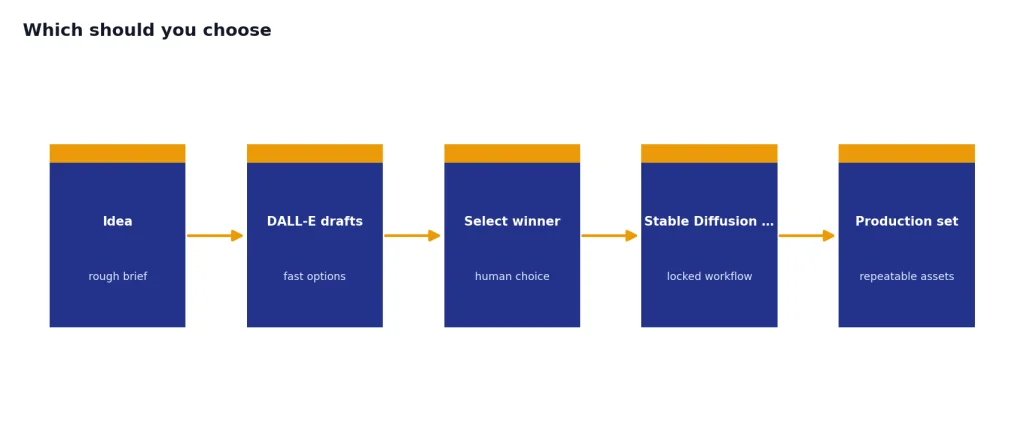

Choose DALL-E if you want the fastest path from idea to usable image. It has straightforward API pricing, strong prompt interpretation, and a managed product experience. It is the safer default for writers, educators, founders, and teams that do not want to operate image infrastructure.

Choose Stable Diffusion if your workflow depends on control. It is the better long-term fit for creators and teams that want to tune style, run local experiments, use specific checkpoints, build repeatable pipelines, or reduce marginal cost at high volume. Its learning curve is higher, but the ceiling is also higher for custom production.

| If you care most about… | Pick | Why |
|---|---|---|
| Ease of use | DALL-E | Less setup and better conversational prompting |
| Lowest experimentation cost at scale | Stable Diffusion | Self-hosting can avoid per-image API billing after setup, if utilization is high enough |
| Simple API billing | DALL-E | Published per-image prices by size and quality |
| Custom brand or art style | Stable Diffusion | Fine-tuning and model ecosystem support deeper control |
| Nontechnical team adoption | DALL-E | Better fit for plain-language requests and quick revisions |
| Local or private workflows | Stable Diffusion | Weights and local tools support self-hosted generation |
| Current OpenAI image-model exploration | GPT Image, not DALL-E 3 alone | As of May 2026, OpenAI’s newer image models, including gpt-image-2, should be evaluated separately from DALL-E 3 pricing |

The best practical setup is often both. Use DALL-E for quick ideation and stakeholder-friendly drafts. Use Stable Diffusion when a winning concept needs to become a repeatable visual system. That mix gives you speed early and control later.

If image generation will live inside ChatGPT rather than a separate design stack, plan procurement around seats, data controls, and review workflows too. Our ChatGPT Free vs Plus vs Pro guide, ChatGPT Plus vs Team comparison, and ChatGPT Team vs Enterprise guide cover those product-level choices.

Frequently asked questions

Is DALL-E cheaper than Stable Diffusion?

Not always. DALL-E 3 has predictable API prices, starting at $0.04 for a standard 1024×1024 image.[1] Stable Diffusion can be cheaper through some hosted models or self-hosting, but self-hosting moves the cost to hardware, cloud compute, utilization, and setup time. Compare cost per accepted image, not just sticker price per generation.

Is Stable Diffusion free for commercial use?

Stability AI says Stable Diffusion 3.5 is free for commercial use for creators and organizations with less than $1M in annual revenue under its community license.[5] Organizations above that threshold should contact Stability AI about an Enterprise License. Always review the current license before shipping commercial work.

Which has better image quality?

DALL-E usually produces polished results faster for general prompts. Stable Diffusion can match or beat it in controlled workflows, especially when using the right checkpoint, LoRA, reference image, and settings. Quality depends on the specific task, not just the model name.

Which is better for developers?

DALL-E is better for developers who want a simple hosted API. Stable Diffusion is better for developers who want control over the pipeline, local inference, custom workflows, or integration with open-source tools. If billing predictability matters more than customization, DALL-E is simpler.

Can DALL-E be self-hosted?

No. DALL-E is a hosted OpenAI model and is not released as downloadable weights. Stable Diffusion is the better option if self-hosting is a requirement.

Should I use DALL-E or Stable Diffusion for brand assets?

Use DALL-E for early concepts and quick drafts. Use Stable Diffusion if you need a consistent brand style across many outputs, especially if your team can build or fine-tune a controlled workflow. For final public assets, review generated images for trademarks, likenesses, text errors, and policy issues.