ChatGPT for doctors is most useful as a supervised clinical and administrative assistant, not as an autonomous medical decision-maker. It can draft patient instructions, summarize guidelines, prepare prior authorization language, translate plain-language explanations, outline differential diagnoses for review, and turn messy notes into structured documentation. The safe version of this workflow starts with de-identification, institutional approval, a privacy review, and clinician verification before anything reaches the chart or the patient. Doctors should treat ChatGPT like a fast resident who writes clearly but needs attending-level review: helpful for saving time, but unable to carry the license, liability, context, or ethical responsibility of a clinician.

Important disclaimer: This article provides operational guidance for evaluating and supervising AI-assisted workflows. It is not medical advice, legal advice, compliance advice, billing advice, or a substitute for your organization’s policies, counsel, privacy officer, coding team, or clinical leadership.

What ChatGPT can do for doctors

Doctors can use ChatGPT to move faster through language-heavy work. The strongest use cases are not “replace the clinician” tasks. They are documentation, summarization, patient communication, education, inbox support, and research triage.

OpenAI introduced OpenAI for Healthcare on January 8, 2026, including ChatGPT for Healthcare, a product aimed at healthcare organizations that want secure AI tools that support HIPAA compliance requirements.[1] OpenAI also announced HealthBench on May 12, 2025, as a benchmark for evaluating AI systems in health conversations.[2] These product and evaluation efforts matter because healthcare use differs from casual ChatGPT use: the workflow needs governance, source checking, auditability, and clear accountability.

The American Medical Association reported in March 2026 that 81% of physicians used AI in their practices, more than double the 38% rate reported in 2023.[6] The same AMA coverage listed common professional uses such as summarizing medical research and standards of care, creating discharge instructions, documenting visit notes, generating chart summaries, drafting portal responses, translation services, and assistive diagnosis.[6] That pattern is the right way to think about ChatGPT for doctors: it is strongest when it organizes, drafts, compares, and explains, and weakest when users ask it to decide without full context.

If you are new to the underlying technology, start with an overview of what ChatGPT is and how GPT models work. Clinicians do not need to become machine learning engineers, but they should understand that ChatGPT generates probable text. It does not “know” a patient, examine a patient, or accept legal responsibility for a care plan.

Where it fits in the clinical workflow

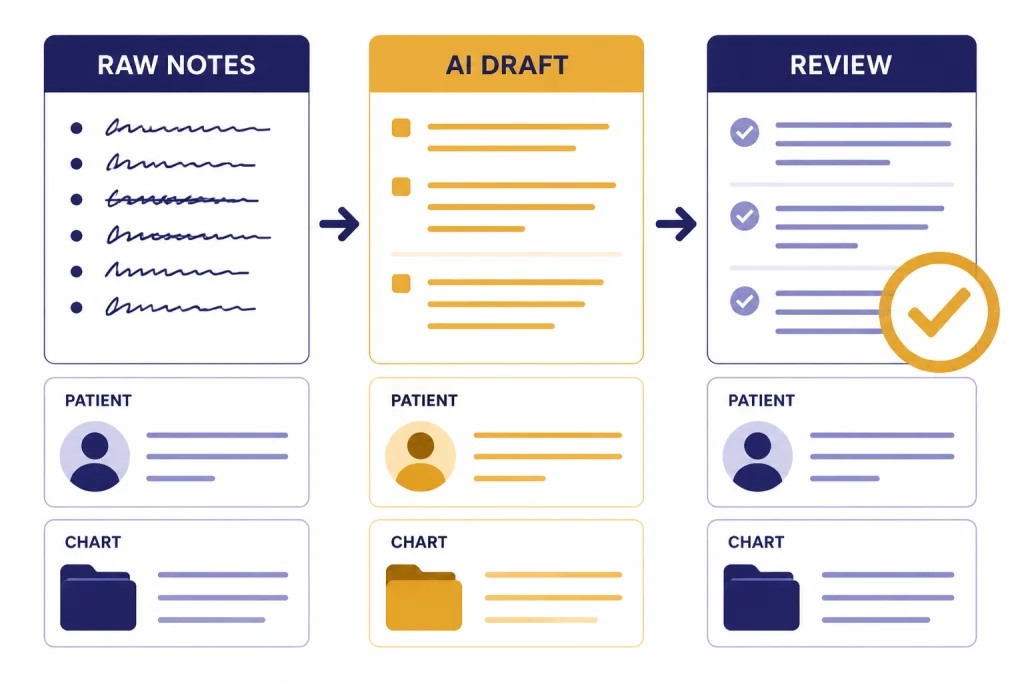

The best place for ChatGPT is between raw clinical material and a human-reviewed output. It can help convert a dictated note into a structured visit summary, a dense guideline into a short teaching memo, or a technical explanation into patient-friendly language. It should not be the final authority on diagnosis, dosing, triage, or disposition.

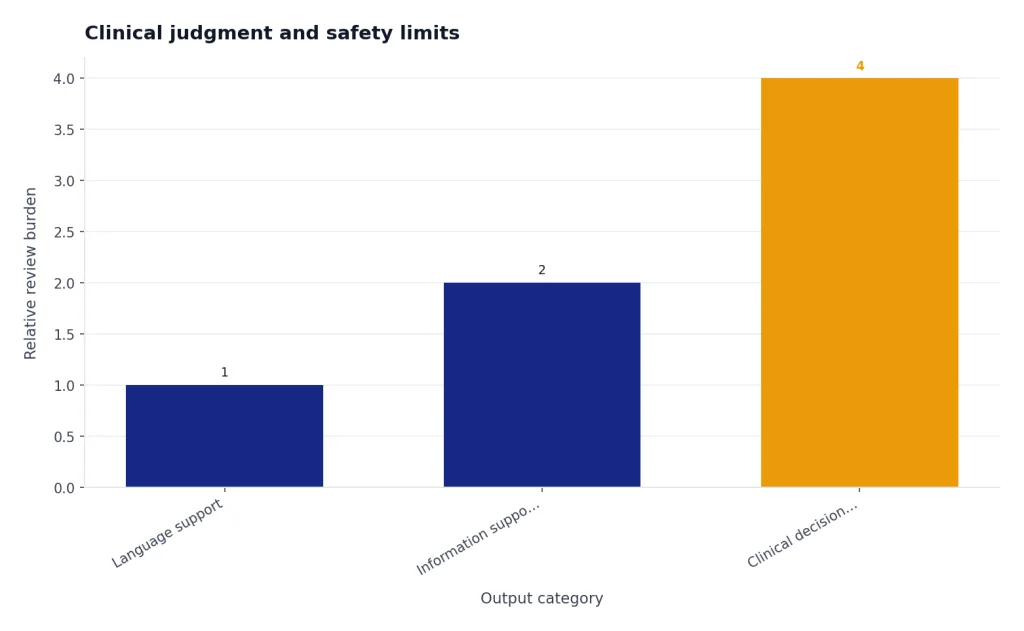

Use the tool differently depending on the task risk. A flu vaccine handout, a no-PHI staff training outline, and a clinic policy summary carry different risks than a differential diagnosis, a medication adjustment, or a triage recommendation. Higher-risk tasks need tighter controls, source review, and documentation of human oversight.

| Use case | Good ChatGPT role | Required clinician control |
|---|---|---|
| Patient education | Draft plain-language explanations and after-visit instructions. | Confirm accuracy, reading level, red flags, and local instructions. |
| Documentation | Turn bullets into a SOAP note, referral letter, or discharge summary draft. | Verify facts against the chart before signing. |
| Research review | Create a reading list, summarize abstracts, and compare guideline positions. | Check primary sources and current local standard of care. |
| Portal messages | Draft empathetic, concise replies for routine issues. | Review tone, medical advice, escalation criteria, and identity-specific details. |
| Differential diagnosis | Suggest possibilities to consider and questions to ask. | Use only as a cognitive aid, not as the decision-maker. |
| Billing support | Organize documentation elements and draft coding queries. | Follow payer rules, compliance policies, and coder review. |

Doctors who have seen AI used in other writing-heavy professions will recognize the pattern. The drafting discipline described in our guide to ChatGPT for lawyers applies here: never outsource judgment, never submit unverified output, and never let a fluent draft hide weak evidence. For data-heavy office work, the closer parallel is AI support for accountants: the assistant can organize, but the professional signs off.

Privacy, HIPAA, and patient data

The most important practical question is not whether ChatGPT sounds medically competent. It is whether your use is approved for protected health information. If you are a covered entity or business associate, do not paste patient-identifiable information into a consumer chatbot or an unapproved workplace tool. Use only systems your organization has reviewed and contracted for.

HHS states that a HIPAA covered entity or business associate may use a cloud service to store or process electronic protected health information only if it enters into a HIPAA-compliant business associate agreement with the cloud service provider and otherwise complies with HIPAA rules.[4] HHS also says a business associate contract must establish permitted uses and disclosures, require safeguards, require breach reporting, and address return or destruction of protected health information at termination.[5] In plain English, a privacy policy is not the same thing as a BAA.

OpenAI says its business-data commitments cover ChatGPT Enterprise, ChatGPT Business, ChatGPT for Healthcare, ChatGPT Edu, ChatGPT for Teachers, and the API platform, and that it does not train models on customer business data by default.[3] OpenAI also describes ChatGPT for Healthcare as part of a healthcare product set built to support HIPAA compliance requirements.[1] That does not mean every individual ChatGPT account is appropriate for PHI. Your organization still needs the right product, contract, settings, access controls, and internal policy.
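For clinics that keep an internal register of approved tools, the rule in this paragraph can be expressed as a default-deny check: an unregistered tool is never allowed, and PHI is allowed only where both a BAA and a compliance approval exist. The Python sketch below is a minimal illustration under stated assumptions; the tool names, the `TOOL_REGISTER` contents, and the `may_submit` function are hypothetical, and a real register is maintained by compliance rather than hard-coded.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ApprovedTool:
    """One entry in a hypothetical clinic AI tool register."""
    name: str
    baa_in_place: bool   # signed business associate agreement with the vendor
    phi_approved: bool   # compliance has approved this configuration for PHI


# Hypothetical register entries; a real register comes from compliance.
TOOL_REGISTER = {
    "approved-enterprise-workspace": ApprovedTool(
        "approved-enterprise-workspace", baa_in_place=True, phi_approved=True
    ),
    "general-drafting-workspace": ApprovedTool(
        "general-drafting-workspace", baa_in_place=False, phi_approved=False
    ),
}


def may_submit(tool_name: str, contains_phi: bool) -> bool:
    """Return True only if the tool is registered and, for PHI, has a BAA and approval."""
    tool = TOOL_REGISTER.get(tool_name)
    if tool is None:
        return False  # unregistered tools are prohibited by default
    if contains_phi:
        return tool.baa_in_place and tool.phi_approved
    return True  # registered tool, de-identified or no-PHI content
```

The design choice worth copying is the default: anything not on the list is refused, so a new or personal account never becomes acceptable by omission.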

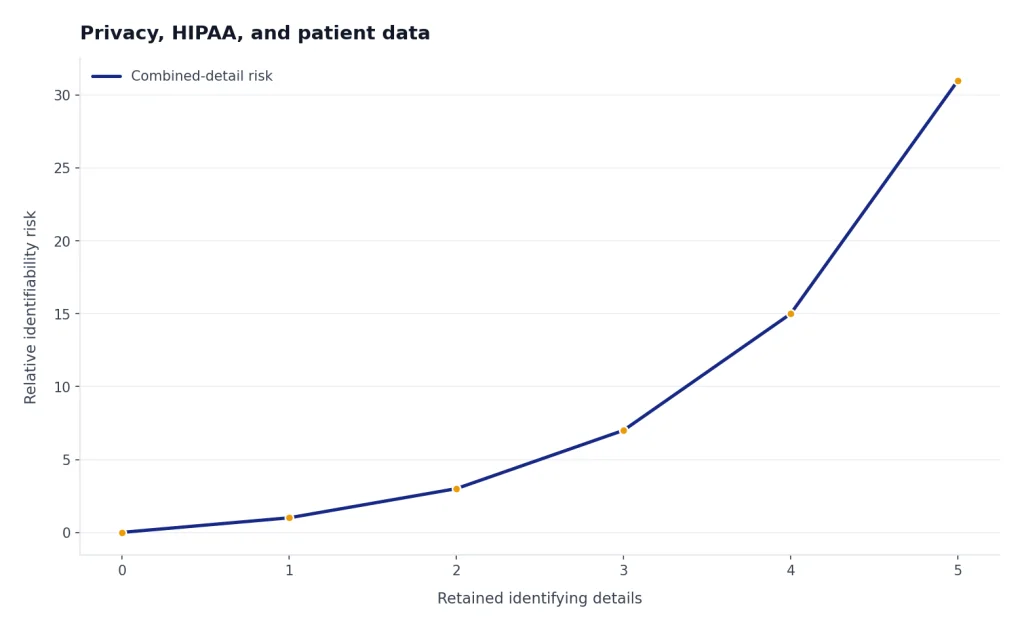

A safe default is simple: if a prompt contains names, dates of birth, addresses, medical record numbers, precise dates of care, unusual clinical details, images, portal messages, or any other identifiable information, treat it as PHI until compliance says otherwise. If you only need help with wording, remove patient identifiers and replace them with neutral placeholders.

- Use “a patient in their 60s” instead of a name and exact age when neither is needed.

- Use “recent hospitalization” instead of admission and discharge dates when dates are unnecessary.

- Remove locations, employer names, school names, and family identifiers.

- Never paste lab reports, images, medication lists, or portal messages into an unapproved tool.

- Document which AI systems are allowed and which are prohibited.
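Placeholder substitution can be partly automated, but only as a first pass before human review. This minimal Python sketch replaces a few obvious identifier formats (slash-formatted dates, labeled medical record numbers, US-style phone numbers, and email addresses) with neutral placeholders. The patterns and the `scrub` function are illustrative assumptions. This is not HIPAA de-identification under the Safe Harbor or Expert Determination methods, and it will miss names, locations, unusual clinical details, and free-text identifiers.

```python
import re

# Illustrative patterns for a few obvious identifier formats only.
# They will NOT catch names, addresses, rare conditions, or free-text identifiers.
PATTERNS = [
    (re.compile(r"\b\d{1,2}/\d{1,2}/\d{2,4}\b"), "[DATE]"),               # e.g. 03/14/2025
    (re.compile(r"\bMRN[:#]?\s*\d+\b", re.IGNORECASE), "[MRN]"),          # e.g. MRN: 123456
    (re.compile(r"\(?\b\d{3}\)?[-.\s]?\d{3}[-.\s]\d{4}\b"), "[PHONE]"),   # e.g. (555) 123-4567
    (re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"), "[EMAIL]"),             # e.g. name@example.org
]


def scrub(text: str) -> str:
    """Replace matched identifier formats with placeholders, in pattern order."""
    for pattern, placeholder in PATTERNS:
        text = pattern.sub(placeholder, text)
    return text


print(scrub("Seen 03/14/2025, MRN: 123456, call 555-123-4567"))
```

Note what it does not do: `scrub("Jane Doe, seen 03/14/2025")` still contains the name. That gap is why the safe default above is to treat a prompt as PHI until compliance says otherwise.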

Clinical judgment and safety limits

ChatGPT can produce confident language even when a response is incomplete, outdated, or wrong. That risk is not theoretical. A systematic review in npj Digital Medicine identified ethical concerns around large language models in medicine, including accuracy, responsibility, bias, privacy, and appropriate use in health-related research and care.[8] A randomized clinical trial published in JAMA Network Open studied how a large language model affected diagnostic reasoning, which shows why the field is evaluating these systems with clinical methods rather than assuming usefulness from fluency alone.[9]

Doctors should separate three categories of output. The first is language support: “make this discharge instruction clearer.” The second is information support: “summarize current guideline themes and list citations I should verify.” The third is clinical decision support: “what should I diagnose or prescribe?” The first category is usually low risk. The second requires source review. The third requires the highest caution and should be governed by institutional policy.
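One way to make the three categories operational is to attach a minimum set of controls to each, so that higher-risk requests inherit every control of the lower tiers. The Python sketch below uses hypothetical control names; your institutional policy, not this mapping, defines what each tier actually requires.

```python
from enum import Enum


class OutputCategory(Enum):
    LANGUAGE_SUPPORT = "language support"             # e.g. make this instruction clearer
    INFORMATION_SUPPORT = "information support"       # e.g. summarize guideline themes
    CLINICAL_DECISION = "clinical decision support"   # e.g. what to diagnose or prescribe


# Hypothetical minimum controls per tier; each tier includes the tier below it.
REQUIRED_CONTROLS = {
    OutputCategory.LANGUAGE_SUPPORT: ["clinician review"],
    OutputCategory.INFORMATION_SUPPORT: ["clinician review", "primary-source check"],
    OutputCategory.CLINICAL_DECISION: [
        "clinician review",
        "primary-source check",
        "institutional policy approval",
        "documented human oversight",
    ],
}


def controls_for(category: OutputCategory) -> list[str]:
    """Return a copy of the controls required before using output in this category."""
    return list(REQUIRED_CONTROLS[category])
```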

The FDA maintains a list of AI-enabled medical devices authorized for marketing in the United States and says the list is intended to help identify devices that have met applicable premarket requirements.[7] General-purpose chatbots are not automatically equivalent to FDA-authorized medical devices. If your workflow depends on AI for diagnosis, treatment recommendations, or patient management, involve compliance, legal, clinical leadership, and informatics before deployment.

A useful rule is “AI can widen the frame, but the clinician narrows the decision.” Ask it to surface alternatives, missing questions, patient education gaps, or documentation inconsistencies. Do not ask it to decide what you should do when the decision depends on examination findings, local formulary rules, current vitals, imaging, allergies, culture results, patient preferences, or a specialist’s plan.

Safer clinical-review workflow

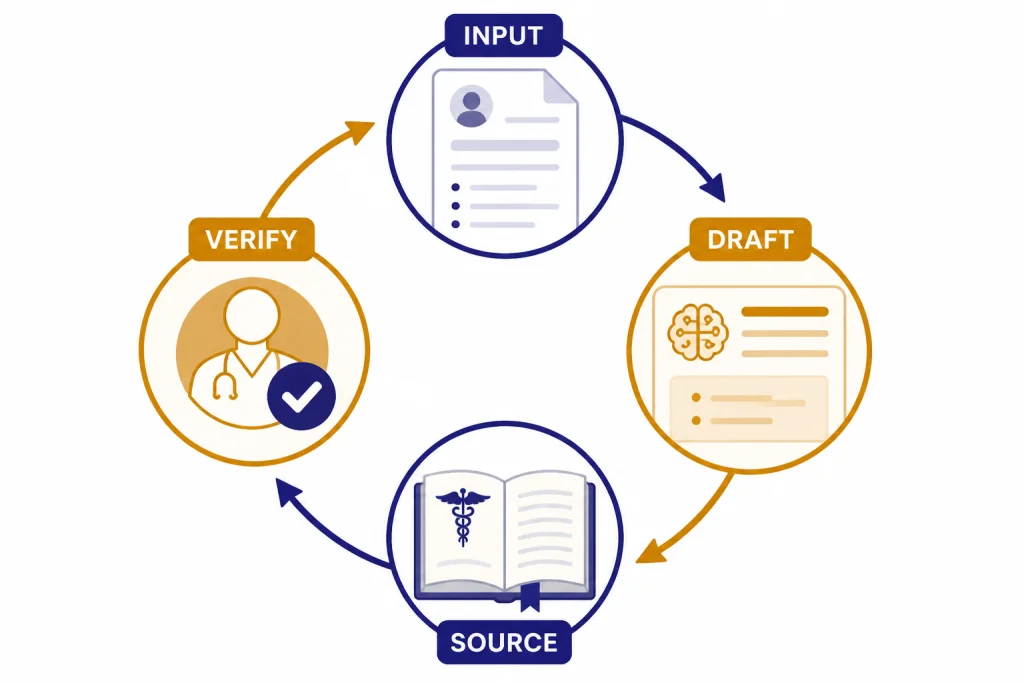

- Start with a de-identified or approved input.

- Ask for a structured draft, not a final medical order.

- Require uncertainty, contraindications, and red flags.

- Check the chart and primary sources.

- Edit the output in your own clinical voice.

- Record the final decision using your normal documentation standard.
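The six steps above can be tracked as a simple checklist that keeps a draft out of the chart until every step is marked complete. This is a hypothetical Python sketch, not a feature of ChatGPT or any EHR; the `DraftReview` class and its field names are assumptions chosen to mirror the list.

```python
from dataclasses import dataclass, fields


@dataclass
class DraftReview:
    """Hypothetical checklist mirroring the six review steps; all start unchecked."""
    input_deidentified_or_approved: bool = False
    requested_structured_draft: bool = False
    uncertainty_and_red_flags_present: bool = False
    chart_and_sources_checked: bool = False
    edited_in_clinician_voice: bool = False
    final_decision_documented: bool = False

    def missing_steps(self) -> list[str]:
        """Names of every step not yet marked complete."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]

    def ready_to_release(self) -> bool:
        """A draft is releasable only when no step is missing."""
        return not self.missing_steps()
```

A partially reviewed draft reports what is still open: `DraftReview(input_deidentified_or_approved=True).missing_steps()` returns the remaining five step names.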

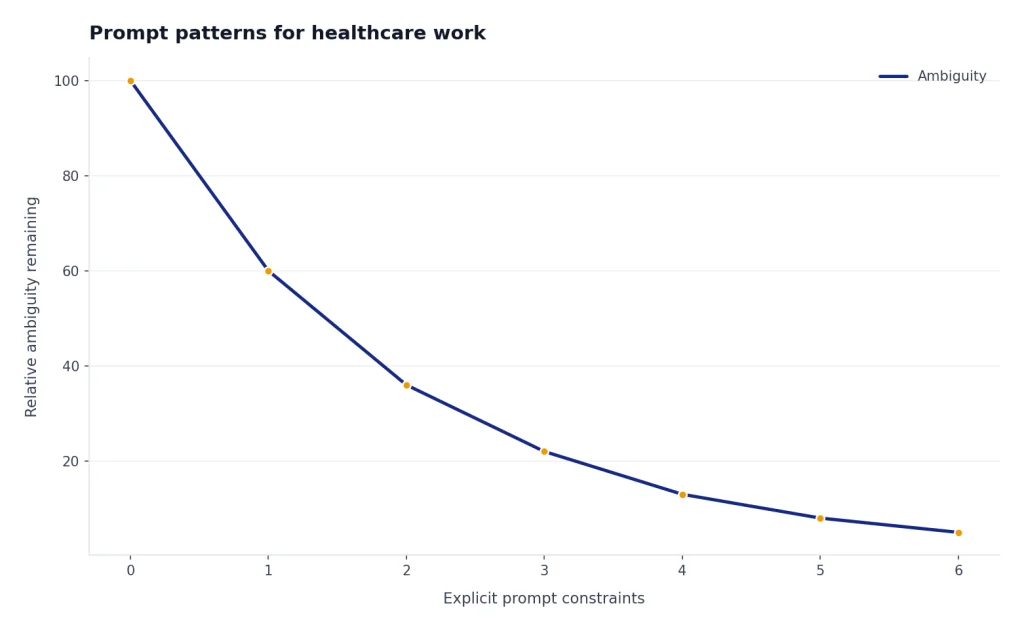

Prompt patterns for healthcare work

Good medical prompts are narrow, explicit, and safety-aware. They define the audience, output format, level of certainty, and review standard. Weak prompts ask for broad advice. Strong prompts ask for a draft that a clinician will verify.
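The four elements named above (audience, output format, level of certainty, and review standard) can be enforced with a small template builder that refuses to produce a prompt when any element is blank. `PromptSpec` and `build_prompt` are hypothetical names in this Python sketch; the point is the structure, not the exact wording.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptSpec:
    task: str             # the narrow task, e.g. "Draft a patient-friendly explanation of asthma."
    audience: str         # who will read the output
    output_format: str    # the structure the draft should follow
    certainty: str        # how the model should handle uncertainty
    review_standard: str  # who verifies the draft before use


def build_prompt(spec: PromptSpec) -> str:
    """Assemble a prompt, raising ValueError if any element is empty or whitespace."""
    for name, value in vars(spec).items():
        if not value.strip():
            raise ValueError(f"missing prompt element: {name}")
    return (
        f"{spec.task}\n"
        f"Audience: {spec.audience}\n"
        f"Format: {spec.output_format}\n"
        f"Certainty: {spec.certainty}\n"
        f"Review: {spec.review_standard}"
    )
```

Failing loudly on a blank field is deliberate: a prompt without a stated review standard is exactly the kind of broad request the paragraph above warns against.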

Patient education prompt

Draft a patient-friendly explanation of [condition or procedure] at an 8th-grade reading level. Include what it is, why it matters, what the patient can do at home, when to call the clinic, and when to seek urgent care. Do not include patient-specific advice. Use a calm, direct tone.

Discharge instruction prompt

Turn these clinician-approved bullet points into discharge instructions. Keep the language plain. Separate medication instructions, follow-up, activity, diet, warning signs, and contact instructions. Add placeholders where clinician-specific details are missing. Do not invent appointments, doses, or test results.

Research triage prompt

I am reviewing evidence on [clinical question]. Create a structured evidence map with guideline sources to check, trial types to look for, outcomes that matter, likely contraindications, and questions to ask a specialist. Do not present conclusions as final. Flag areas where evidence may be uncertain.

Differential diagnosis reflection prompt

Using only the de-identified information below, list diagnostic categories that a clinician might consider. For each category, list supporting features, features that argue against it, missing history questions, relevant exam findings, and tests a clinician may consider. Do not rank a final diagnosis.

For multilingual patient communication, use the same verification standard you would apply to any translation support. Our guide to ChatGPT for translators explains why bilingual review matters when both tone and precision count. For patient handouts, the workflow also overlaps with using ChatGPT for email writing: write plainly, remove ambiguity, and check the call to action.

For academic or evidence work, do not let a chatbot replace database searching, critical appraisal, or citation management. Use it to plan the review, not to certify the evidence. If you are building a literature workflow, compare this approach with our guide to ChatGPT for research.

Illustrative before/after example 1: de-identified patient education draft. The following is a sample workflow, not a recommendation for a real patient.

De-identified prompt:

Draft an 8th-grade handout for an adult patient who was told they have high blood pressure at a routine visit. Do not give patient-specific medication advice. Include home BP logging, lifestyle basics, when to call the clinic, and urgent warning signs. Leave placeholders for clinic phone number and follow-up timing.

Example clinician-reviewed output:

Your blood pressure was higher than expected today. One high reading does not always mean you have long-term high blood pressure, but it should be checked again.

What you can do now:

- If your clinician asked you to check at home, write down your blood pressure readings, the date, the time, and whether you had caffeine, exercise, or stress beforehand.

- Choose lower-salt foods when possible, stay active as advised, avoid smoking, and limit alcohol if you drink.

- Bring your blood pressure log to your next visit.

Call the clinic at [clinic phone number] if your readings stay above the range your clinician gave you or if you have questions about your plan.

Seek urgent care or emergency help if you have chest pain, trouble breathing, weakness on one side, confusion, fainting, or a severe sudden headache.

Follow-up: [clinician to insert timing and instructions].

Illustrative before/after example 2: de-identified portal reply draft. This shows how a clinician can constrain the output so the model drafts tone and structure without inventing care instructions.

De-identified prompt:

Draft a portal reply for an adult patient asking whether mild nausea after starting a new medication is normal. Do not name the medication, change the dose, or give a final medical decision. Ask the patient to confirm severity, timing, other symptoms, and whether they can keep fluids down. Include red flags that should prompt urgent care. Use a warm tone.

Example clinician-reviewed output:

Thanks for letting us know. Mild nausea can happen with some medications, but we want to understand what you are experiencing before giving instructions.

Please reply with:

- When the nausea started

- How severe it is

- Whether you have vomiting, fever, abdominal pain, rash, dizziness, swelling, or trouble breathing

- Whether you can keep fluids down

- Whether you have taken any new medicines or supplements

Do not stop or change your medication unless our clinic tells you to do so. If you have trouble breathing, swelling of the lips or face, severe abdominal pain, repeated vomiting, fainting, or symptoms that feel urgent, seek urgent care or emergency help now.

A clinician will review your reply and advise on next steps.
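Both examples leave bracketed placeholders for details only the clinic can supply, such as `[clinic phone number]`. A short pre-send check can block any draft that still contains one. This Python sketch assumes the square-bracket convention used in the examples; it flags any bracketed text, including legitimate brackets, which errs on the side of caution. It cannot detect invented content, so clinician review still applies.

```python
import re

# Matches square-bracket placeholders such as "[clinic phone number]".
PLACEHOLDER = re.compile(r"\[[^\[\]]+\]")


def unresolved_placeholders(draft: str) -> list[str]:
    """Return every bracketed placeholder still present in a draft, in order."""
    return PLACEHOLDER.findall(draft)


def safe_to_send(draft: str) -> bool:
    """True only when no bracketed placeholder remains."""
    return not unresolved_placeholders(draft)
```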

Implementation checklist for clinics

A clinic should not adopt ChatGPT one doctor at a time with private rules. It needs a shared operating model. The goal is not to block useful tools; it is to make safe use easy and risky use difficult.

- Name approved tools. State which ChatGPT plan, enterprise workspace, API deployment, or healthcare-specific product may be used.

- Define PHI rules. Spell out when patient data may be used, when it must be de-identified, and when it is prohibited.

- Require human review. Make clear that AI-generated clinical content is a draft until a licensed clinician verifies it.

- Create prompt templates. Standardize common tasks such as discharge instructions, portal replies, referral summaries, and prior authorization letters.

- Track high-risk use cases. Review any use involving diagnosis, medication decisions, triage, behavioral health, pediatrics, pregnancy, oncology, or emergency care.

- Train staff. Teach clinicians, nurses, medical assistants, scribes, and administrators what they may and may not enter.

- Audit outputs. Periodically sample AI-assisted documentation for accuracy, tone, omissions, and inappropriate advice.

- Update policy. Revisit the policy when the product, contract, EHR integration, or regulation changes.
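The audit step works best when the sample is random and reproducible, so reviewers cannot cherry-pick and a second reviewer can re-draw the same set. A minimal Python sketch, assuming a hypothetical list of document IDs and a sampling fraction set by clinic policy:

```python
import random


def audit_sample(document_ids: list[str], fraction: float, seed: int) -> list[str]:
    """Pick a reproducible random subset of AI-assisted documents for manual audit."""
    if not 0 < fraction <= 1:
        raise ValueError("fraction must be in (0, 1]")
    if not document_ids:
        return []
    # Always audit at least one document when any exist.
    k = max(1, round(len(document_ids) * fraction))
    rng = random.Random(seed)  # seeded so the same sample can be re-drawn later
    return sorted(rng.sample(document_ids, k))
```

For example, `audit_sample(ids, 0.05, seed=2026)` on 200 IDs returns 10 sorted IDs, and the same seed always returns the same 10. Recording the seed alongside the audit results is what makes the sample checkable later.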

Teams that use structured spreadsheets for audits can adapt methods from our guide to ChatGPT for Excel. Teams building custom intake, routing, or documentation products should review OpenAI API pricing and procurement issues before assuming a consumer subscription is the right path. If you are creating a reusable prompt library, the principles in our ChatGPT prompt generator guide apply: standardize, test, revise, and document.

The best implementation metric is not “how many clinicians used AI.” It is whether the tool reduced low-value administrative work without degrading accuracy, patient trust, privacy, or accountability. A time-saving draft that introduces one wrong medication instruction is not a productivity win.
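That metric can be written as a pass/fail rule in which a critical error vetoes any time savings. The Python sketch below is illustrative: the `PilotResult` fields, what counts as a critical error, and the default zero tolerance for audited errors are assumptions a clinic would replace with its own thresholds.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PilotResult:
    minutes_saved_per_clinician_week: float
    audited_documents: int
    documents_with_errors: int  # any accuracy, tone, or omission problem found in audit
    critical_errors: int        # e.g. a wrong medication instruction


def is_productivity_win(result: PilotResult, max_error_rate: float = 0.0) -> bool:
    """A pilot counts as a win only if it saves time and introduces no critical errors."""
    if result.critical_errors > 0:
        return False  # no amount of time saved offsets a critical error
    if result.audited_documents == 0:
        return False  # without audit evidence, success cannot be claimed
    error_rate = result.documents_with_errors / result.audited_documents
    return result.minutes_saved_per_clinician_week > 0 and error_rate <= max_error_rate
```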

Frequently asked questions

Can doctors use ChatGPT with patient information?

Only if the tool, contract, configuration, and organizational policy allow it. For HIPAA covered entities and business associates, patient-identifiable information generally requires an approved system and a proper business associate agreement when a vendor handles ePHI. When in doubt, de-identify the prompt or do not use the tool.

Is ChatGPT for doctors accurate enough for diagnosis?

Not as a stand-alone diagnostic authority. It can help generate possibilities, organize clinical reasoning, and identify missing questions, but the physician must verify the output against the patient, chart, exam, evidence, and local standard of care. Use it as a cognitive aid, not as the attending.

What is the safest first use case for a medical practice?

Start with low-risk, no-PHI drafting. Examples include generic patient education handouts, staff training outlines, call scripts for scheduling, and plain-language explanations that a clinician reviews. Avoid diagnosis, triage, medication changes, and patient-specific recommendations until governance is mature.

Can ChatGPT write prior authorization letters?

Yes, it can draft a prior authorization letter from clinician-approved facts. It should not invent history, failed therapies, dates, test results, payer criteria, or coding conclusions. A clinician, coder, or trained staff member should verify the letter against the chart, payer policy, and organizational billing rules before submission.

Should doctors disclose ChatGPT use to patients?

Follow your organization’s policy, state rules, and professional guidance. Disclosure is especially important when AI materially affects communication, documentation, or care decisions. Even when disclosure is not required for a simple drafting aid, clinicians remain responsible for the final content.

Can medical students and residents use ChatGPT?

They can use it for study plans, explanations, practice questions, and writing feedback if their program permits it. They should not use it to fabricate citations, bypass learning, or enter patient data into unapproved systems. Supervisors should teach verification habits early.